BT_Celt is here to give us a brief intro to data engineering and the modern data architecture design paradigm.

You can find him here on Twitter: @BT_Celt

You can find more of his work here: BT_Celt.substack

Background

A cloud systems architect and developer for a Fortune 500 company.

Why Should You Read This?

I architect and implement cloud systems that handle large amounts of data. One system I worked on ingests terabytes of data daily, which ultimately flows downstream to data scientists, where the magic happens. Additionally, the market for data scientists, data engineers, and cloud engineers in general is on fire, along with the rest of the tech labor market. Knowing how production systems work, having hands-on experience with them, or simply being able to talk about them will be a plus.

It helps to look at data architecture from a fundamentals-and-principles perspective, so that you have a general mental model you can apply to a specific use case or company.

What Does a Data Engineer Do?

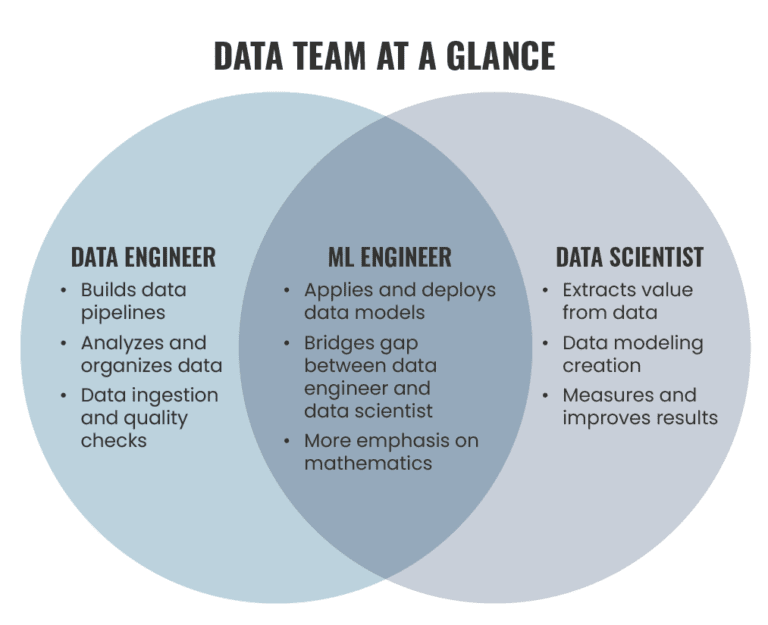

At a high level, a data engineer is responsible for getting data from one place to another in a format that can be consumed by the persona. In this case the persona could be a data scientist, a developer, a data analyst, etc.

Modern Data Architecture

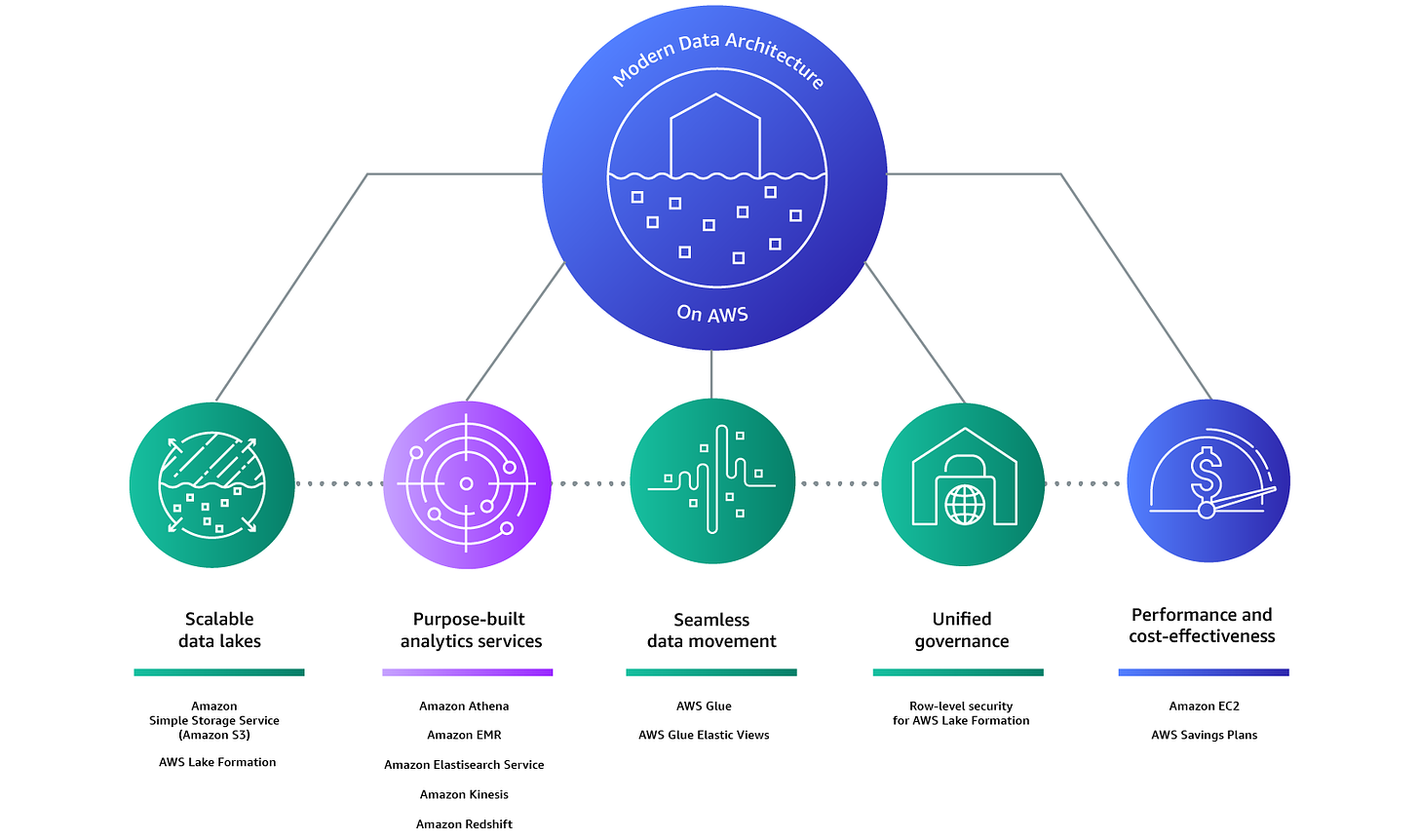

Let's take a look at what AWS has identified as the key features of a modern data architecture:

• Scalable, performant and cost-effective.

• Purpose-built data services.

• Support for open-data formats.

• Decoupled storage and compute.

• Seamless data movements.

• Support diverse consumption mechanisms.

• Secure and governed.

Scalable, Performant & Cost-Effective

This should be fairly easy to achieve with modern cloud computing. Taking advantage of managed services, pay-as-you-go pricing, and economies of scale results in benefits for the customer. This is at the core of the argument for cloud computing.

Purpose-Built Data Services

The need for specific services is rooted in the fact that the number of data producers is increasing, resulting in an exponential increase in data. Using one data service for system logs, Internet of Things (IoT) data, and customer session data creates problems, since each kind of data arrives in different volumes and shapes and is consumed differently. One advantage of using cloud providers is that some of these services are already built and optimized for you. For example, AWS states that “Apache Spark on EMR runs 1.7 times faster than standard Apache Spark 3.0, which means petabyte-scale analysis can be run at less than half of the cost of traditional on-premises solutions.”

Support For Open Data Formats

An open data format is essentially the same as an open file format: its specification is publicly available and it can be used freely, typically because it is open source under a permissive license. This is important because we want our data to be portable and easily consumable. Apache Parquet is becoming very popular in modern data architecture.

Decoupled Storage & Compute

Decoupling is a recurring theme in cloud architecture because it lets you scale each component independently. Customers pay only for what they use, and compute and storage can each scale up and down on demand. Decoupling storage and compute is harder for on-prem data architectures and for data lakes (more on this later) built on Hadoop/HDFS, where the same nodes typically both store the data and run the compute.

Seamless Data Movements

Data services need to be able to move data in, out, and around the perimeter (more on this later). This is part of the core definition of a data engineer: being able to move the data. This is also where your ETL (extract, transform, load) pipelines come into play.
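As a concrete illustration of moving data, here is a minimal extract, transform, load (ETL) sketch using only Python's standard library. Everything in it is hypothetical: the CSV contents, the cleaning rules, and the `orders` table. In-memory SQLite stands in for the destination; in a real pipeline the source might be an API or files landing in object storage, and the target a warehouse or lake.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system.
RAW_CSV = """order_id,amount_usd,country
1,19.99,us
2,,de
3,5.00,US
"""


def extract(text: str) -> list[dict]:
    """Extract: parse the raw CSV text into one dict per row."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: drop rows missing an amount, cast types, normalize country codes."""
    cleaned = []
    for row in rows:
        if not row["amount_usd"]:
            continue  # skip incomplete records
        cleaned.append((int(row["order_id"]), float(row["amount_usd"]), row["country"].upper()))
    return cleaned


def load(rows: list[tuple], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into a table that consumers can query."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, amount_usd REAL, country TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT country, SUM(amount_usd) FROM orders GROUP BY country").fetchall())
```

Keeping extract, transform, and load as separate functions is the design point: each step can be tested, rerun, or swapped out (for example, pointing `extract` at a different source system) without touching the others.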

Support Diverse Consumption Mechanisms

This is related to ‘purpose-built services’, but the idea here is that we want any persona to be able to consume the data. That may mean specific formats, visualizations, exposed APIs, or loading the data into a relational or NoSQL database.

Secure & Governed

Of course, security. Security has been ‘shifted left’ and is now considered a day 0 priority. Most boards have security on their minds (no one wants to be in the news for a data breach), so it trickles down as a priority. Primarily, for data we care about encryption in transit and at rest, both with strong ciphers. Depending on your country, state, and/or industry, there might also be additional regulations and rules around your firm’s data. Modern cloud providers offer identity and access management, which can be used to implement Role-Based Access Control (RBAC). Logging and monitoring for compliance and audit purposes also fall under this pillar.
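The core idea of RBAC is that permissions are attached to roles and users are granted roles, rather than individual permissions. Below is a toy, in-memory sketch of that check, with each access decision appended to an audit log to tie in the logging point above. The role names and the `"read:curated"` style permission strings are hypothetical; in practice you would rely on your cloud provider's IAM service rather than application code like this.

```python
from dataclasses import dataclass, field

# Hypothetical roles mapped to the actions they may perform on datasets.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "data_engineer": {"read:raw", "write:raw", "read:curated", "write:curated"},
    "data_scientist": {"read:curated"},
    "auditor": {"read:audit_log"},
}


@dataclass
class User:
    name: str
    roles: set[str] = field(default_factory=set)


# Every access decision is recorded as (user, action, allowed) for later audit.
audit_log: list[tuple[str, str, bool]] = []


def is_allowed(user: User, action: str) -> bool:
    """Allow the action if any of the user's roles carries that permission."""
    allowed = any(action in ROLE_PERMISSIONS.get(role, set()) for role in user.roles)
    audit_log.append((user.name, action, allowed))
    return allowed


alice = User("alice", {"data_scientist"})
print(is_allowed(alice, "read:curated"))  # True
print(is_allowed(alice, "write:raw"))     # False
```

Granting access by role keeps permissions manageable as teams grow: onboarding a new data scientist means assigning one role, not copying a list of individual grants.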

TLDR:

• We want a data architecture that is scalable while being cost-effective and performant.

• Purpose-built data services.

• Open data formats like Apache Parquet.

• Decoupled storage and compute, so each can scale individually.

• Seamless data movement that tears down data silos.

• Support for diverse consumption methods, so we can empower each persona.

• Secure and governed, with granular permissions and RBAC.

Let’s take a look at implementations of data architecture, starting with the Data Warehouse.

The Data Warehouse Paradigm

The data warehouse is not a new concept, and it can be considered legacy in certain scenarios.

Here is a simple definition from Informatica:

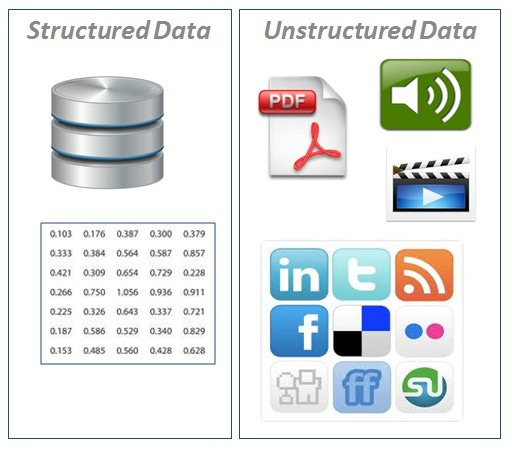

Data warehousing is a technology that aggregates structured data from one or more sources so that it can be compared and analyzed for greater business intelligence.

The key word here is structured data, which makes data warehouses inflexible. Data warehouses are schema on write, which means the schema is defined up front and data must conform to it when it is written (remember this for later). Only being able to handle structured data in the big data age is a significant issue, as most data (~80-90%) is unstructured. Firms found themselves in a position where their main data store was not compatible with most of the data coming in.
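A minimal sketch of schema on write, using Python's built-in SQLite as a stand-in for a warehouse table (the `sales` table and its columns are hypothetical). The schema exists before any data does, and a record that doesn't fit it is rejected at write time:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# The schema is declared before any data arrives: this is "schema on write".
conn.execute(
    "CREATE TABLE sales (sale_id INTEGER PRIMARY KEY, amount REAL NOT NULL, region TEXT NOT NULL)"
)


def write_record(record: dict) -> bool:
    """Insert the record only if it fits the predefined schema; reject it otherwise."""
    try:
        conn.execute(
            "INSERT INTO sales (sale_id, amount, region) VALUES (:sale_id, :amount, :region)",
            record,
        )
        return True
    except sqlite3.IntegrityError:
        return False  # e.g. a NOT NULL or PRIMARY KEY constraint was violated
    except sqlite3.ProgrammingError:
        return False  # a required field is missing entirely


print(write_record({"sale_id": 1, "amount": 9.5, "region": "EMEA"}))  # True
print(write_record({"sale_id": 2, "amount": 3.0, "region": None}))    # False: violates NOT NULL
print(write_record({"log_line": "user clicked checkout"}))            # False: unstructured record
```

One caveat: SQLite enforces the column list and the `NOT NULL` constraints here but is loose about column types, so treat this as an illustration of the idea rather than of how a real warehouse type-checks data.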

Here is what Google has to say about data warehouses in its Building a Data Lakehouse whitepaper (emphasis mine):

[data warehouses] have proven to be expensive and cannot often address the challenges around data freshness, scaling, and high costs. Also, data warehouses only fit the needs of tabular data, limiting their usability for a rapidly growing variety of data types and structures. Schema was often applied on-write and was driven by a specific analytic use case. This also limited the flexibility of future use of the data for things such as machine learning and advanced analytics.

Note that scaling issues and high costs are problems that will be exacerbated as data grows exponentially. Again we see issues with the ‘variety of data types and structures’. The lack of support for different data types and structures then propagates downstream and inhibits ML/DS personas, because their work depends on that data. ML and DS are how these companies want to ‘create insights’, or create value for customers. The data warehouse paradigm already looks like it is not the single solution for modern big data.

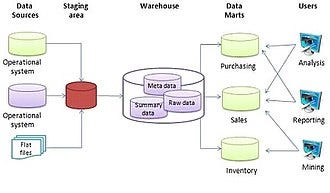

A warehouse architecture could look something like this:

In this particular implementation we have data marts. Data marts are essentially smaller data warehouses focused on a single department or business unit. Each mart pulls the subset of warehouse data relevant to that department or business unit, which is why marts are typically faster to build out. Note that the warehouse holds raw data, summary data, and metadata.
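One lightweight way to sketch the warehouse-to-mart relationship is a view per department over a central table, shown here with Python's built-in SQLite. The `fact_sales` table, the departments, and the figures are hypothetical, and real marts are often materialized as separate tables or databases rather than views.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Central warehouse table holding data for every department.
conn.execute("CREATE TABLE fact_sales (sale_id INTEGER, department TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO fact_sales VALUES (?, ?, ?)",
    [(1, "marketing", 120.0), (2, "finance", 75.0), (3, "marketing", 30.0)],
)

# Each mart exposes only the subset of warehouse data relevant to one department.
# SQLite does not allow bound parameters in CREATE VIEW, so the names are
# interpolated; they come from this fixed, trusted tuple, never from user input.
for dept in ("marketing", "finance"):
    conn.execute(
        f"CREATE VIEW mart_{dept} AS "
        f"SELECT sale_id, amount FROM fact_sales WHERE department = '{dept}'"
    )

print(conn.execute("SELECT SUM(amount) FROM mart_marketing").fetchone())  # (150.0,)
```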

Due to the shortcomings of the data warehouse, the paradigm shifted.

The Data Lake Paradigm

The downsides of the data warehouse led to the creation of Data Lakes, especially those based on the Hadoop ecosystem. From the same Google whitepaper:

data lakes were developed as low-cost storage solutions that essentially amounted to distributed storage of files

One of the key differences with the data lake is that it is schema on read, which means a schema is applied to the data when it is read rather than when it is written.
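A minimal schema-on-read sketch in Python: raw JSON lines (hypothetical click events) are stored untouched, and the reader applies its own schema when it consumes them. The field names, the casting rules, and the choice to skip lines that aren't valid JSON are all assumptions for illustration.

```python
import json

# Raw events land in the lake as-is; nothing is validated at write time.
RAW_LINES = [
    '{"user": "a1", "ts": "2023-01-05T10:00:00", "clicks": "3"}',
    '{"user": "b2", "ts": "2023-01-05T10:01:00"}',
    "not json at all",
]

# This particular reader's schema: which columns it wants and how to cast them.
SCHEMA = {"user": str, "clicks": int}


def read_with_schema(lines, schema):
    """Apply the schema at read time: parse, keep only the schema's columns, cast them."""
    for line in lines:
        try:
            raw = json.loads(line)
        except json.JSONDecodeError:
            continue  # this reader skips bad lines; another might quarantine them
        # Missing columns become None instead of failing the whole read.
        yield {col: (cast(raw[col]) if col in raw else None) for col, cast in schema.items()}


print(list(read_with_schema(RAW_LINES, SCHEMA)))
```

A different consumer could read the same raw lines with a different schema, for example keeping `ts` and ignoring `clicks`, without anyone rewriting the stored data. The cost is that every reader has to cope with messy input itself.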

Data lakes were supposed to solve the issues created by data warehouses, but most of the potential benefits did not materialize. From the same Google whitepaper (emphasis mine):

[data lakes] looked great on paper by promising low cost and the ability to scale. In reality, these promises were not realized for many organizations. This was mainly because they were not easily operationalized, productionized, or utilized. To interact with data in the lake, an end user had to be fairly proficient in particular coding paradigms, which limited the set of people who could use the data. All of these in return increased the total cost of ownership. There were also significant data governance challenges created by the data lakes. They did not work well with the existing identity and access management (IAM) and security models. Furthermore, they ended up creating data silos because data was not easily shared across the Hadoop environment.

Data Lake Recap

The tradeoff was an ecosystem that was harder to manage, difficult to integrate with IAM models and controls, and prone to creating data silos. These issues showed up as duplicated data and effort and reduced collaboration, which resulted in an overall slower time to market.

Limbo

At this point, many organizations used both the data warehouse and the data lake in production. Unstructured raw data was stored in the data lake, where data exploration occurred, and the data warehouse was used for analytics and similar work. So now organizations had two independent data silos, with high costs, high overhead, and low collaboration.

Data Lake House

In recent years, the solution modern organizations have come up with is the Lake House. You can think of it almost as a child of the warehouse and the lake that takes the best from both. Some of you might be thinking, WTF, this solution sounds like the aforementioned ‘Limbo’ stage. Not really; the implementation and the culture are different. Let’s take a look at some principles from AWS on modern Lake House implementation.

From an AWS blog post:

As a modern data architecture, the Lake House approach is not just about integrating your data lake and your data warehouse, but it’s about connecting your data lake, your data warehouse, and all your other purpose-built services into a coherent whole. The data lake allows you to have a single place you can run analytics across most of your data while the purpose-built analytics services provide the speed you need for specific use cases like real-time dashboards and log analytics.

We are combatting limbo by breaking down the silos and turning the two into a ‘coherent whole’. We want to take the best from both and create a unified data system.

Principles

From the AWS blog post:

This Lake House approach consists of following key elements:

• Scalable Data Lakes

• Purpose-built Data Services

• Seamless Data Movement

• Unified Governance

• Performant and Cost-effective

This looks very similar to the principles we looked at earlier. The key here is that we use the Data Lake as the core of the Lake House and then propagate data downstream to purpose-built data services.

Why Data Lake At The Core?

One of the principles of Modern Data Architecture is ‘decoupled storage and compute’. The modern Data Lake is generally implemented on an object store. Using an object store as the Data Lake gives us cheap storage, and we pay only for storage; we are not paying for any compute at this point. The AWS implementation of a Data Lake House builds the Lake on S3, their object store service; on Google Cloud (GCP) it is the Cloud Storage service. Both of these object store services are cheap, durable (meaning the provider does not ‘lose’ objects), and available (meaning the service is highly available and fault tolerant). On both AWS and GCP, these object stores scale easily to petabytes.

HDFS vs Object Store Data Lake

Recall that Hadoop Data Lakes are based on HDFS, the Hadoop Distributed File System. The key distinction here is that in the cloud, object storage is used instead of HDFS. Generally, the total cost of ownership is lower for object store based Data Lakes than for HDFS/Hadoop based Data Lakes. Object store Data Lakes aimed to resolve the issues pointed out in the excerpt from the Google whitepaper referenced earlier. However, there are still some use cases where HDFS makes sense, either on-prem or in the cloud; more can be read here if interested.

Why are ‘Purpose-built Data Services’ needed?

This may be getting repetitive, but because of the challenges around the growth of data sources and unstructured data, there is a need for ‘Purpose-built Data Services’. From an AWS whitepaper (emphasis mine):

With the recent exponential growth of data coming from an ever-growing ecosystem of data stores, system logs, Software as a Service (SaaS) applications, machines, and Internet of Things (IoT) devices, a single type of system is not effective in meeting all business use cases.

This is why the big cloud providers like AWS and GCP have tightly integrated their object store services with ETL, data warehouse, and machine learning services: to enable purpose-built data services and seamless data movement.

What does a Lake House look like?

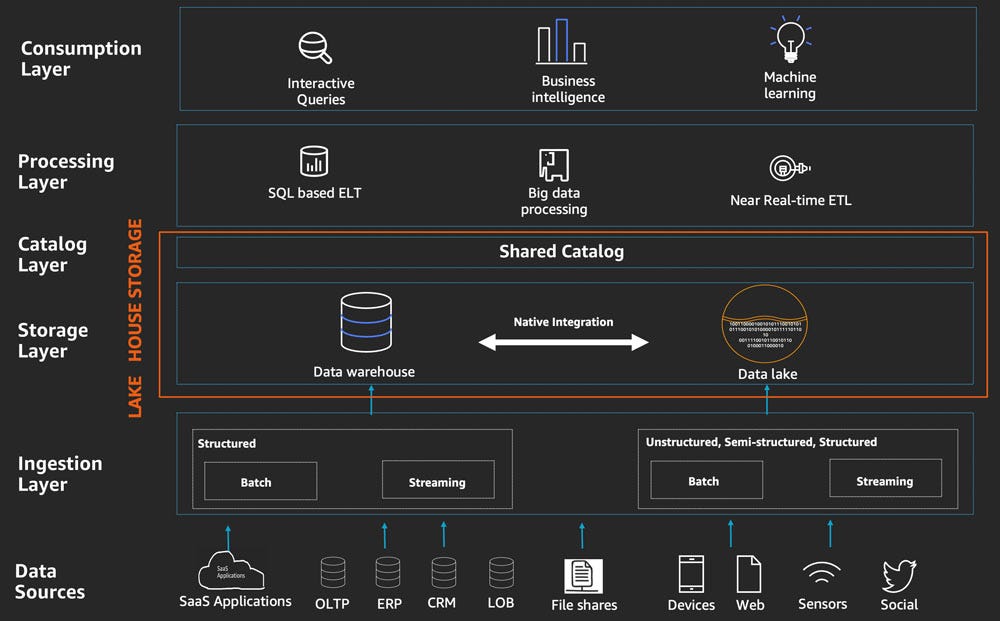

An implementation could look something like this:

Notice there are many data sources, and assume many of them use different data formats. In the ingestion layer, the data is either streamed (think real time) or written in batches (think not real time).
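The difference between the two ingestion styles can be sketched in a few lines of Python. Here a plain list stands in for the storage layer, with each appended element representing one write; this is a hypothetical simplification, since real pipelines would use a managed streaming or batch ingestion service.

```python
from itertools import islice
from typing import Iterable


def ingest_streaming(events: Iterable[dict], sink: list) -> None:
    """Streaming: write each event as soon as it arrives (low latency, many small writes)."""
    for event in events:
        sink.append([event])


def ingest_batch(events: Iterable[dict], sink: list, batch_size: int) -> None:
    """Batch: group events and write each group at once (higher latency, fewer larger writes)."""
    it = iter(events)
    while chunk := list(islice(it, batch_size)):
        sink.append(chunk)


events = [{"event_id": i} for i in range(5)]

streamed: list = []
ingest_streaming(events, streamed)

batched: list = []
ingest_batch(events, batched, batch_size=2)

print(len(streamed))              # 5 writes of one event each
print([len(w) for w in batched])  # [2, 2, 1]
```

Streaming minimizes how long data waits before it lands; batching trades that latency for fewer, larger writes, which is usually more efficient for storage.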

In the storage layer, the Data Lake is generally used to house the raw, enriched, and/or other forms of data. For the same reasons discussed earlier, an object store service is the better solution for this use case. Open file formats are preferred, with Apache Parquet being very common.

The catalog layer is shared by the Warehouse and the Lake and stores metadata about the datasets.
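A toy sketch of what a shared catalog holds: metadata about each dataset (location, format, schema), not the data itself. The dataset name, the `s3://example-lake/` location, and the column types are hypothetical; on AWS this role is typically filled by a managed service such as the AWS Glue Data Catalog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetEntry:
    """Metadata the catalog keeps about one dataset (not the data itself)."""
    name: str
    location: str           # where the files live, e.g. an object store prefix
    file_format: str
    schema: dict[str, str]  # column name -> type name


class Catalog:
    """A single registry that lake-side and warehouse-side engines both consult."""

    def __init__(self) -> None:
        self._entries: dict[str, DatasetEntry] = {}

    def register(self, entry: DatasetEntry) -> None:
        self._entries[entry.name] = entry

    def lookup(self, name: str) -> DatasetEntry:
        return self._entries[name]  # raises KeyError for unknown datasets


catalog = Catalog()
catalog.register(DatasetEntry(
    name="orders_enriched",
    location="s3://example-lake/enriched/orders/",  # hypothetical bucket and prefix
    file_format="parquet",
    schema={"order_id": "bigint", "amount_usd": "double", "country": "string"},
))

print(catalog.lookup("orders_enriched").location)
```

Because every engine resolves datasets through the same entries, there is one definition of where `orders_enriched` lives and what its columns are, instead of one per silo.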

The data processing layer is where things get more exciting. From an AWS blog post:

Components in the data processing layer of the Lake House Architecture are responsible for transforming data into a consumable state through data validation, cleanup, normalization, transformation, and enrichment.
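Each step named in that quote can be sketched as a small function applied per record. This is a hypothetical, pure-Python illustration (the field names, the reference table, and the rules are made up); at scale the same chain would typically run inside a distributed engine such as Spark.

```python
# Hypothetical reference data used for enrichment.
COUNTRY_NAMES = {"US": "United States", "DE": "Germany"}


def validate(rec: dict) -> bool:
    """Validation: required fields must be present."""
    return "order_id" in rec and "amount" in rec


def clean(rec: dict) -> dict:
    """Cleanup: strip stray whitespace from string values."""
    return {k: v.strip() if isinstance(v, str) else v for k, v in rec.items()}


def normalize(rec: dict) -> dict:
    """Normalization: consistent casing and numeric types."""
    return {**rec, "country": rec.get("country", "").upper(), "amount": float(rec["amount"])}


def transform_fields(rec: dict) -> dict:
    """Transformation: derive a new field (amount in integer cents)."""
    return {**rec, "amount_cents": round(rec["amount"] * 100)}


def enrich(rec: dict) -> dict:
    """Enrichment: join against reference data."""
    return {**rec, "country_name": COUNTRY_NAMES.get(rec["country"], "unknown")}


def process(records):
    """Run every valid record through the chain; invalid records are dropped."""
    for rec in records:
        if validate(rec):
            yield enrich(transform_fields(normalize(clean(rec))))


raw = [{"order_id": 1, "amount": " 19.99 ", "country": " us "}, {"amount": "5"}]
print(list(process(raw)))
```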

ETL/ELT jobs, MapReduce, whatever your org needs to do. Remember, these processing jobs are purpose-built, which is going to increase your speed to market. From the same post:

The processing layer can access the unified Lake House storage interfaces and common catalog, thereby accessing all the data and metadata in the Lake House. This has the following benefits:

• Avoids data redundancies, unnecessary data movement, and duplication of ETL code that may result when dealing with a data lake and data warehouse separately

• Reduces time to market

The key here is that the Lake and the Warehouse are not siloed; they are used together. In the Limbo stage, work is duplicated, there can be multiple copies of a dataset at different stages, etc. Eliminating those issues results in faster iteration and development, ultimately leading to a faster time to market.

The data consumption layer is where our data scientists would typically work: querying, data visualization, machine learning, all the good stuff. Different personas have different needs and requirements, so the goal is to deliver the data in the form each persona needs.
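As a small illustration of delivering the same curated data in the form each persona needs, here is one hypothetical result set served three ways with Python's standard library. Which persona gets which format is an assumption for illustration, not a rule.

```python
import csv
import io
import json

# Hypothetical curated result set produced by the processing layer.
ROWS = [
    {"country": "US", "revenue": 24.99},
    {"country": "DE", "revenue": 12.5},
]


def as_csv(rows: list[dict]) -> str:
    """For an analyst opening the data in a spreadsheet."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def as_json(rows: list[dict]) -> str:
    """For a developer consuming the data through an API."""
    return json.dumps(rows)


def as_columns(rows: list[dict]) -> dict[str, list]:
    """For a data scientist loading columns into a dataframe or model."""
    return {key: [row[key] for row in rows] for key in rows[0]}


print(as_csv(ROWS))
print(as_json(ROWS))
print(as_columns(ROWS))
```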

Conclusion

We started with the desired principles of a modern data architecture and worked through the paradigms until we landed on a Data Lake House architecture. There are a couple of good links in here for additional reading. Hopefully this provides some insight into the decisions behind data engineering.

-Celt

Thanks for reading!
