MLOps 7: Packaging Your ML Models

Virtual Environments, Requirements.txt, Serializing & De-Serializing ML Models, Testing Your Code

A popular interview question for MLE roles is what candidates know about pickle and joblib. The main thing interviewers are looking for is the “Why”. Do you know why most MLEs have switched from pickle to joblib?

It’s basically the same principle as when you are going for a Data Analyst role for R programming. The first question they ask you is if you know why people use data.table, instead of data.frame. If you mess this up, you are no longer in the top bucket.

Table of Contents

Virtual Environments

Requirements.txt

Serializing and De-Serializing ML Models

Testing Your Code

1 - Virtual Environments

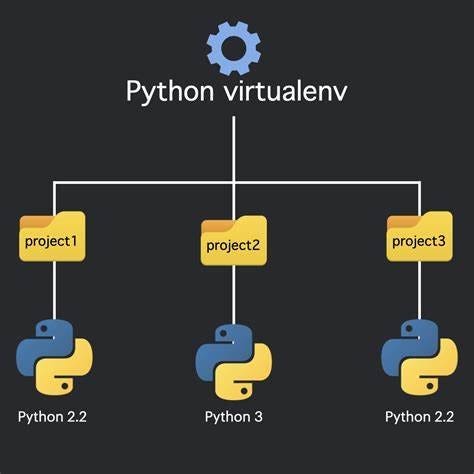

MLOps, or Machine Learning Operations, is the process of taking machine learning models from development to production. A significant aspect of MLOps is ensuring that models run consistently across development, testing, and production. This is where Python virtual environments become invaluable. In this section, we look at what virtual environments are, why they matter, and how to use them.

1.1 What is a Virtual Environment (in Python)?

A virtual environment is a self-contained directory tree. It contains a Python interpreter and its own set of installed packages, giving you an isolated environment in which to run Python scripts. This keeps the dependencies required by different projects separate.

Imagine you have two projects: Project A needs version 1.0 of a library, but Project B requires version 2.0. Without a virtual environment, managing these dependencies can become a nightmare. With a virtual environment, each project can live in its own environment. Each environment has its specific dependencies. This ensures that there are no clashes or incompatibilities.

1.2 Why Do We Use Virtual Environments?

Isolation: As mentioned, it allows multiple projects with differing requirements to coexist without conflict.

Consistency: This is especially crucial in MLOps, where a model may depend on specific package versions to function correctly. Keeping development, testing, and production environments consistent reduces unexpected behavior.

Version Control: Virtual environments enable the use of specific package versions, allowing developers to test new versions without affecting the current setup.

1.3 Setting Up a Virtual Environment

Installing a Virtual Environment

Python 3 comes with the venv module, making it easy to create lightweight virtual environments. If you're using Python 2, you'll need the virtualenv tool.

Here’s how you can install it:

pip install virtualenv

How to Create a Virtual Environment

Depending on your Python version, you can create a virtual environment using one of the following methods:

For Python 3:

python3 -m venv /path/to/new/virtual/environment

For Python 2:

virtualenv /path/to/new/virtual/environment
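The same step can also be scripted from Python itself, which is handy in setup scripts. The following is a minimal sketch using the standard-library venv module; the helper name create_env and the demo-env directory are hypothetical, and pip is left out of the new environment (with_pip=False) only to keep the example fast.

```python
import tempfile
import venv
from pathlib import Path


def create_env(env_dir: Path, with_pip: bool = False) -> Path:
    """Create a virtual environment at env_dir and return the path of its config file."""
    # venv.create is the standard-library counterpart of `python3 -m venv <dir>`.
    venv.create(env_dir, with_pip=with_pip)
    # Every environment built by venv contains a pyvenv.cfg file at its root.
    return env_dir / "pyvenv.cfg"


if __name__ == "__main__":
    # Build a throwaway environment in a temporary directory (hypothetical name).
    with tempfile.TemporaryDirectory() as tmp:
        cfg = create_env(Path(tmp) / "demo-env")
        print("environment created:", cfg.exists())
```

For a real project you would likely pass with_pip=True so that pip is available inside the new environment.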

How to Activate a Virtual Environment

Once a virtual environment is created, it needs to be activated:

On macOS and Linux:

source /path-to-new-virtual-environment/bin/activate

On Windows:

C:\> \path-to-new-virtual-environment\Scripts\activate

Upon activation, your shell's prompt will change, usually showing the name of the activated environment, indicating that you're now working within the virtual environment.
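Besides looking at the prompt, you can confirm from inside Python whether the interpreter belongs to a virtual environment. This is a small sketch that assumes the environment was created with venv on Python 3; the function name in_virtual_environment is hypothetical.

```python
import sys


def in_virtual_environment() -> bool:
    """Return True when the running interpreter belongs to a venv."""
    # Inside a venv, sys.prefix points at the environment directory, while
    # sys.base_prefix still points at the Python installation it was built from.
    return sys.prefix != sys.base_prefix


print("virtual environment active:", in_virtual_environment())
print("interpreter prefix:", sys.prefix)
```

A check like this can sit at the top of a training or deployment script to warn when it is about to run against the system-wide Python.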

2 - Requirements.txt

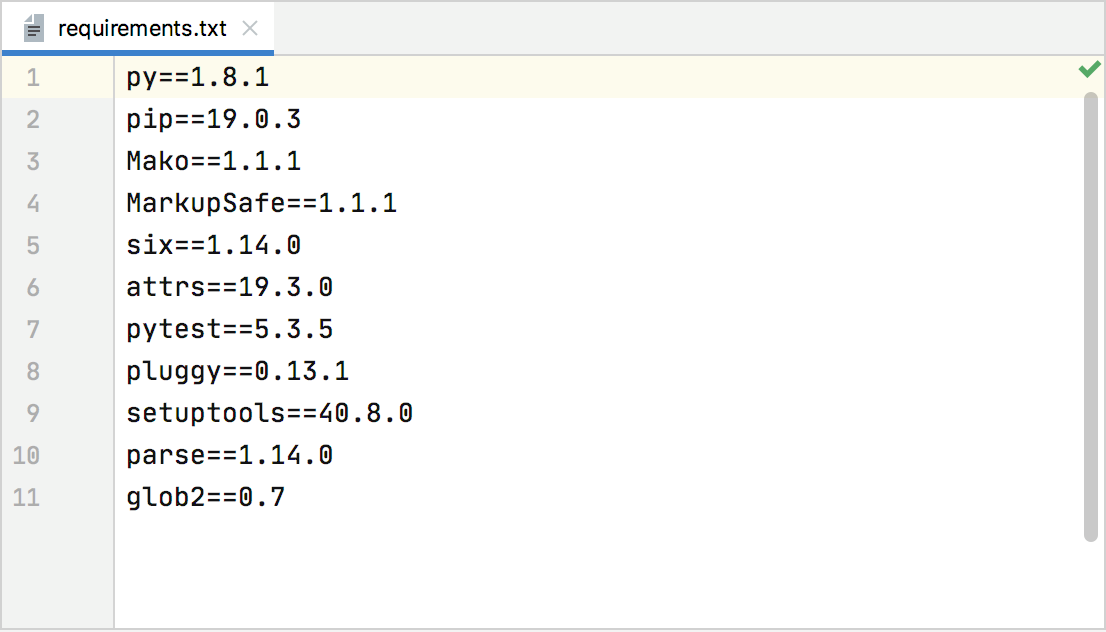

MLOps is all about applying robust software engineering practices to machine learning. The main purpose is to automate the end-to-end machine learning lifecycle, which makes consistency across different environments pivotal. The requirements.txt file plays an instrumental role in this, often in the context of data profiling for machine learning in production.

2.1 Requirements.txt File

The requirements.txt file is a standard convention in Python projects. It lists the project's dependencies, typically pinned to specific versions, so that every collaborator or system working on the project installs the exact same set of packages and versions. This leads to consistent behavior across different setups.

In the context of MLOps, this file gains even more significance:

Reproducibility: One of the fundamental principles of MLOps is reproducibility. Whether it's the developer's local machine, a test environment, or a production server, the model should exhibit the same behavior. The requirements.txt file ensures that the exact environment can be replicated.

Data Profiling: When putting machine learning models into production, the profiling of data often requires specific tools or libraries. Ensuring the right version of these tools is in place is essential for accurate and consistent profiling.
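To make the reproducibility point concrete, here is a rough sketch of how you might verify that an environment really matches its pinned versions. It only understands simple name==version lines, and the function names and the sample package versions are illustrative assumptions, not part of any standard tool.

```python
from importlib import metadata
from typing import Dict, Optional, Tuple


def parse_pins(text: str) -> Dict[str, str]:
    """Parse 'name==version' lines; skip blanks, comments, and other specifiers."""
    pins = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if "==" not in line:
            continue
        name, version = line.split("==", 1)
        pins[name.strip().lower()] = version.strip()
    return pins


def find_mismatches(pins: Dict[str, str]) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return {name: (pinned, installed)} for packages that differ or are missing."""
    mismatches = {}
    for name, pinned in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None  # package is not installed in this environment
        if installed != pinned:
            mismatches[name] = (pinned, installed)
    return mismatches


# Illustrative requirements content (versions chosen only as an example).
sample = "numpy==1.26.4\npandas==2.2.0  # pinned for the feature pipeline\n"
print(find_mismatches(parse_pins(sample)))
```

An empty result means every pinned package is installed at exactly the pinned version. Anything else is a signal that the environment has drifted from the file.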

2.2 Install All of the Packages in the requirements.txt File

To install all the dependencies listed in the requirements.txt file, navigate to the directory containing the file and run the following command:

pip install -r requirements.txt

This instructs pip (Python’s package installer) to fetch and install all the packages with their respective versions as mentioned in the file. Automating this step ensures that every environment setup, from development to production, is seamless and consistent.
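One way to automate this step from a setup or deployment script is to call pip through the interpreter that is currently running, so the packages land in the active environment. The sketch below assumes that approach; the helper names pip_install_command and install_requirements are hypothetical.

```python
import subprocess
import sys
from pathlib import Path
from typing import List


def pip_install_command(requirements: Path) -> List[str]:
    """Build the command line equivalent to `pip install -r <file>`."""
    # Using `sys.executable -m pip` targets the pip of the running interpreter,
    # which is the one inside the active virtual environment.
    return [sys.executable, "-m", "pip", "install", "-r", str(requirements)]


def install_requirements(requirements: Path) -> None:
    """Install every dependency listed in the given requirements file."""
    if not requirements.is_file():
        raise FileNotFoundError(f"no requirements file at {requirements}")
    # check=True raises CalledProcessError if pip exits with an error.
    subprocess.run(pip_install_command(requirements), check=True)


print(" ".join(pip_install_command(Path("requirements.txt"))))
```

Calling install_requirements(Path("requirements.txt")) performs the actual installation; the print statement only shows the command that would be run.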

2.3 How to Create Your Own requirements.txt File?

If you've been working on a project and wish to generate a requirements.txt file based on the libraries you've used, it's a straightforward process. Here’s how you can create one:

First, it's advisable (though not mandatory) to work within a virtual environment (as discussed in the previous section) to ensure you're only capturing the dependencies relevant to your project.

Once you're in your project’s environment, run:

pip freeze > requirements.txt

The pip freeze command lists all the installed packages in the current environment, and the > operator writes this list to the requirements.txt file.

Remember, while this method captures all dependencies, it might be wise to manually review the generated file to ensure it only includes necessary packages, especially if you've installed additional tools for testing or debugging.
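As a rough illustration of that review step, the sketch below drops packages you consider development-only from a list of pip freeze lines. The function name strip_dev_packages, the choice of which packages count as dev-only, and the sample versions are all assumptions made for the example.

```python
from typing import Iterable, List


def strip_dev_packages(freeze_lines: Iterable[str], dev_only: Iterable[str]) -> List[str]:
    """Keep only the pinned lines whose package name is not in dev_only."""
    dev = {name.lower() for name in dev_only}
    kept = []
    for line in freeze_lines:
        # The package name is everything before the '==' version pin.
        name = line.split("==", 1)[0].strip().lower()
        if name not in dev:
            kept.append(line)
    return kept


# Illustrative pip freeze output (versions chosen only as an example).
frozen = ["scikit-learn==1.4.0", "pytest==8.0.0", "joblib==1.3.2"]
print(strip_dev_packages(frozen, {"pytest"}))
```

Some teams keep the removed packages in a separate development requirements file instead, so that production images install only what the model needs at runtime.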

3 - Serializing & De-Serializing ML Models

One of the cornerstones of putting Machine Learning models into production (a key part of MLOps) is the ability to serialize and de-serialize models. As data is profiled, features are engineered, and models are trained,