Python Data Skills 13: Tidying Your Data

Removing duplicated rows, Fixing many-to-many relationships, Stack & Melt, Unstack & Pivot

In data science and machine learning, having the right data is only the starting point. How easily and effectively you can analyze that data often depends on how it is structured. Tidying and reshaping are crucial preprocessing steps that prepare your dataset for exploration and analysis.

Everything below is about how to tidy up your data.

Table of Contents

Removing duplicated rows

Fixing many-to-many relationships

Stack & Melt

Unstack & Pivot

1 - Removing duplicated rows

When working with large datasets, it's not uncommon to encounter duplicate rows. These duplications can introduce noise, skew analyses, and generate misleading results. Removing them is an essential step in tidying and reshaping data, because it protects data quality.

1.1 Where the duplicated data might have originated from

Duplicate rows can sneak into your datasets for several reasons:

Data Collection Redundancies: Data gathered from multiple sources or through repeated data collection processes can lead to overlaps.

Data Merging: When combining datasets, if there aren’t unique identifiers or if there are inconsistencies in the merging criteria, duplicates can arise.

Human Errors: Duplications can occur when rows are mistakenly copied and pasted, or when entries are resubmitted during data input.

System Glitches: Sometimes, technical malfunctions or software bugs introduce duplicates during data storage or transfer.

Note: Audit your data collection, merging, and storage processes on a regular basis. This minimizes the introduction of duplicates.

1.2 Why you *should* eliminate duplicated data

Duplicate data can distort true insights. For instance:

Statistical Measures: Averages, medians, and other statistical measures can be biased if duplicates artificially inflate certain values.

Model Training: Machine learning models can be misled by duplicates, leading to overfitting and reduced generalization.

Wasted Resources: Duplicates increase dataset size. This can slow down analyses and waste computational resources.

Note: Always include a deduplication step in your data preprocessing pipeline. This is especially necessary before conducting statistical analyses or training machine learning models.
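To make the effect on statistical measures concrete, here is a minimal sketch. The `orders` table and its values are made up for illustration; the point is only that a repeated row pulls the mean toward its value.

```python
import pandas as pd

# Hypothetical order data: order 3 was accidentally recorded three times.
orders = pd.DataFrame({
    "order_id": [1, 2, 3, 3, 3],
    "amount": [10.0, 20.0, 90.0, 90.0, 90.0],
})

# duplicated() flags every repeat after the first occurrence.
duplicate_count = int(orders.duplicated().sum())

# The repeated 90.0 rows inflate the average.
mean_with_duplicates = orders["amount"].mean()
mean_deduplicated = orders.drop_duplicates()["amount"].mean()

print(duplicate_count, mean_with_duplicates, mean_deduplicated)
```

With the duplicates the mean is 60.0; after deduplication it is 40.0.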

1.3 drop_duplicates

The pandas library offers a simple yet powerful method for removing duplicate rows: drop_duplicates().

# Remove all duplicate rows

cleaned_df = df.drop_duplicates()

# Remove duplicates based on specific columns

cleaned_df = df.drop_duplicates(subset=['column1', 'column2'])

Note: While drop_duplicates() is straightforward, remember to carefully choose the subset of columns on which you want to base the deduplication, especially if your dataset has many features.
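The snippet above assumes a DataFrame named df already exists. A self-contained sketch, using a small made-up table with the same column1 and column2 names plus a hypothetical column3, shows how the choice of subset changes which rows survive:

```python
import pandas as pd

# Made-up data: row 1 is an exact copy of row 0; rows 2 and 3 differ only in column3.
df = pd.DataFrame({
    "column1": ["a", "a", "b", "b"],
    "column2": [1, 1, 2, 2],
    "column3": ["x", "x", "y", "z"],
})

# Compare all columns: only the exact copy (row 1) is dropped.
exact = df.drop_duplicates()

# Compare only column1 and column2: rows 1 and 3 are dropped.
by_subset = df.drop_duplicates(subset=["column1", "column2"])

print(exact)
print(by_subset)
```

Note that the subset version discards row 3 even though its column3 value is different, which is why the subset deserves a deliberate choice.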

1.4 Potential Pitfalls

Order Matters: By default, drop_duplicates() keeps the first occurrence and drops subsequent duplicates (the keep parameter controls this). If your data has a temporal dimension, ensure it's sorted correctly before deduplication.

Near Duplicates: Sometimes, rows are very similar but not exact duplicates because of minor discrepancies. Consider techniques like fuzzy matching to identify and handle these.

Data Verification: After deduplication, cross-check the cleaned data against original sources or known metrics to ensure no critical data has been inadvertently removed.

Note: Deduplication isn't a mindless elimination process. Approach it with care. Ensure that you aren’t removing valuable variations in your dataset. Consider employing checksums or hash functions to verify data integrity post-deduplication.
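The first two pitfalls can be sketched together. The `readings` table, its column names, and the helper column `sensor_key` are hypothetical. The normalization shown (trimming whitespace and unifying case) is a simple stand-in for near-duplicate handling, not real fuzzy matching.

```python
import pandas as pd

# Hypothetical sensor readings; " a " is a sloppy spelling of sensor "A".
readings = pd.DataFrame({
    "sensor": ["A", "A", "B", " a "],
    "timestamp": pd.to_datetime(
        ["2024-01-01", "2024-01-03", "2024-01-02", "2024-01-05"]
    ),
    "value": [1.0, 1.5, 2.0, 1.7],
})

# Near duplicates: normalize the key so "A" and " a " compare equal.
readings["sensor_key"] = readings["sensor"].str.strip().str.upper()

# Order matters: sort by time, then keep the most recent row per sensor.
latest = (
    readings.sort_values("timestamp")
    .drop_duplicates(subset="sensor_key", keep="last")
)

# Verification: record how many rows were removed.
rows_removed = len(readings) - len(latest)
print(latest)
print(rows_removed)
```

Sorting first and passing keep="last" makes the surviving row an explicit decision rather than an accident of input order.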

2 - Fixing many-to-many relationships

In relational databases and data modeling, relationships between entities play a pivotal role.

One-to-one and one-to-many relationships are straightforward and common, but many-to-many relationships pose unique challenges. Addressing them when tidying and reshaping data is crucial for maintaining data coherence and ensuring effective analysis.

2.1 Understanding many-to-many relationships

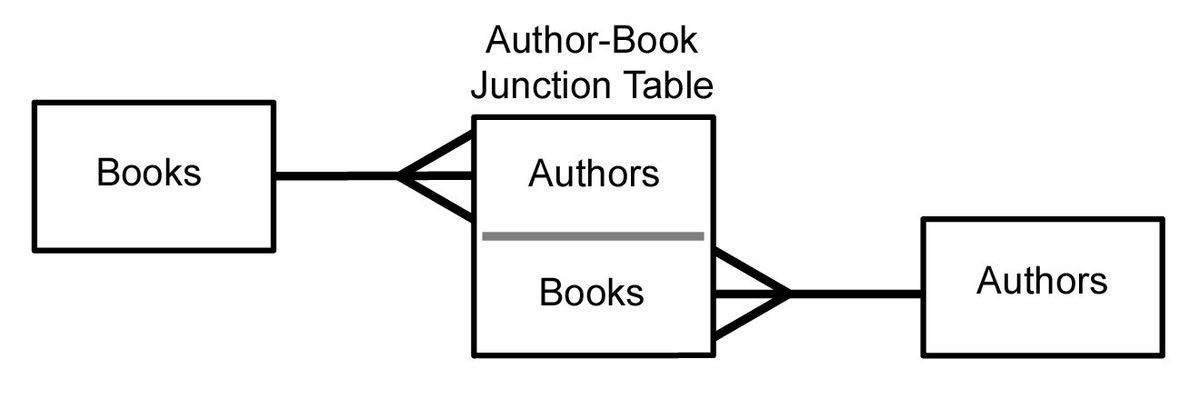

A many-to-many relationship exists when multiple records in one table are associated with multiple records in another table. For instance, consider a database that tracks authors and books. An author can write multiple books, and a book can have multiple authors. This interrelation constitutes a many-to-many relationship.

Note: When designing or analyzing a database schema, create Entity Relationship Diagrams (ERDs). These diagrams provide a visual representation of the relationships. They can help you identify any many-to-many relationships.

2.2 Problems Posed by many-to-many relationships

At a glance, many-to-many relationships might seem to offer flexibility, but they introduce ambiguity and complications:

Ambiguity and Redundancy: These relationships can lead to data redundancy, which makes it unclear how records relate to each other and complicates data retrieval and reporting.

Performance Issues: Queries over unresolved many-to-many relationships tend to become complex, which can slow down data retrieval.

Update Anomalies: Changes in one table may inadvertently affect multiple records in another table, leading to data inconsistencies.

Note: Periodically review the relationships in your database. If you find that retrieving related data regularly requires many complex queries, this might be a sign of problematic many-to-many relationships.
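In pandas, one way to spot such a relationship is to check how a merge behaves. The sketch below uses two made-up tables that share a repeating key, genre, which is an assumption for illustration only. It relies on the validate parameter of merge, which raises pd.errors.MergeError when the keys do not match the declared relationship.

```python
import pandas as pd

# Hypothetical tables in which the join key repeats on both sides.
authors = pd.DataFrame({
    "genre": ["SciFi", "SciFi", "Crime"],
    "author": ["John", "Jane", "Ann"],
})
books = pd.DataFrame({
    "genre": ["SciFi", "SciFi", "Crime"],
    "title": ["Book A", "Book B", "Book C"],
})

# A plain merge pairs every SciFi author with every SciFi book.
merged = authors.merge(books, on="genre")

# Declaring the expected relationship makes pandas check the keys.
try:
    authors.merge(books, on="genre", validate="one_to_many")
    is_many_to_many = False
except pd.errors.MergeError:
    is_many_to_many = True

print(len(merged), is_many_to_many)
```

The merged table has five rows from three inputs on each side, and nothing in it says which author actually wrote which book.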

2.3 Resolving many-to-many relationships

The best way to manage a many-to-many relationship is to split it into two one-to-many relationships using an intermediary table (often called a "junction" or "bridge" table). Let’s pretend you have two tables: Authors and Books.

Here is how you’d resolve it:

Create a new table: AuthorBook.

The AuthorBook table will have a composite primary key made up of two columns: AuthorID and BookID. These columns are also foreign keys linking to the Authors and Books tables, respectively.

Every time an author writes a book, an entry is made in the AuthorBook table, establishing the relationship.

import pandas as pd

# Sample structure in pandas

authors_df = pd.DataFrame({'AuthorID': [1, 2], 'AuthorName': ['John', 'Jane']})

books_df = pd.DataFrame({'BookID': [1, 2], 'BookTitle': ['Book A', 'Book B']})

author_book_df = pd.DataFrame({'AuthorID': [1, 2, 1], 'BookID': [1, 1, 2]})

Note: When splitting many-to-many relationships, take a look at the intermediary table. Ensure that it has a composite primary key composed of the foreign keys. This structure ensures unique combinations and helps maintain data integrity.
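A DataFrame has no built-in primary or foreign key constraints, so in pandas these rules have to be checked by hand. The sketch below rebuilds the three sample tables and shows one possible way to do that and to join them back together. The variable names `has_duplicate_pairs`, `keys_are_valid`, and `resolved` are introduced here for illustration.

```python
import pandas as pd

authors_df = pd.DataFrame({"AuthorID": [1, 2], "AuthorName": ["John", "Jane"]})
books_df = pd.DataFrame({"BookID": [1, 2], "BookTitle": ["Book A", "Book B"]})
author_book_df = pd.DataFrame({"AuthorID": [1, 2, 1], "BookID": [1, 1, 2]})

# Composite key check: each (AuthorID, BookID) pair should appear only once.
has_duplicate_pairs = bool(
    author_book_df.duplicated(subset=["AuthorID", "BookID"]).any()
)

# Foreign key check: every ID in the junction table must exist in its parent table.
keys_are_valid = bool(
    author_book_df["AuthorID"].isin(authors_df["AuthorID"]).all()
    and author_book_df["BookID"].isin(books_df["BookID"]).all()
)

# Join through the junction table; each step is a many-to-one merge.
resolved = (
    author_book_df
    .merge(authors_df, on="AuthorID", validate="many_to_one")
    .merge(books_df, on="BookID", validate="many_to_one")
)
print(resolved)
```

Each row of the result is one author-book pairing, so Book A appears twice (once per author) without any ambiguity about who wrote what.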

3 - Stack & Melt

The format in which data is stored can greatly affect the ease of analysis. Two of the most common formats are the "wide" and "long" formats. Each has its merits, but there are times when reshaping data between the two becomes crucial for efficient analysis.

Enter stack and melt, two powerful tools in the pandas library designed specifically for this purpose.

3.1 Wide Format

A wide format table, as its name suggests, spreads data across multiple columns. Each subject or entity has a single row, and the columns represent various attributes or time points. It is the format most people think of when they picture a spreadsheet.

Example:

Note: While wide format is visually comprehensive, it can be cumbersome when trying to perform operations across different attributes or time points.
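As an illustration, here is a small wide-format table built in pandas. The student names and scores are made up and are not the article's original example. It also shows why operations across attributes can be cumbersome: the score columns have to be named explicitly.

```python
import pandas as pd

# Wide format: one row per student, one column per subject.
df_wide = pd.DataFrame({
    "Student": ["Alice", "Bob"],
    "Math": [90, 78],
    "Science": [85, 92],
})

# A per-student average must list the score columns by name.
df_wide["Average"] = df_wide[["Math", "Science"]].mean(axis=1)
print(df_wide)
```

Adding a new subject would mean adding a column and updating every expression that lists the subject columns.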

3.2 Long Format

In contrast to wide format, a long format table (also called "tidy" format) has one column for the entities, another for the attribute or time point, and a third for the value. Each row represents a single observation.

Example:

Note: Long format simplifies operations across attributes or time points and is more conducive for certain types of data visualization.
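For comparison, here is a small long-format table, again with made-up names and scores rather than the article's original example. Because the subject is now a value in a column, a grouped operation works without naming individual subjects.

```python
import pandas as pd

# Long format: one row per (student, subject) observation.
df_long = pd.DataFrame({
    "Student": ["Alice", "Alice", "Bob", "Bob"],
    "Subject": ["Math", "Science", "Math", "Science"],
    "Score": [90, 85, 78, 92],
})

# Average score per subject; new subjects are picked up automatically.
mean_by_subject = df_long.groupby("Subject")["Score"].mean()
print(mean_by_subject)
```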

3.3 Convert wide to long via stack

The stack method in pandas moves a level of the DataFrame's column labels into the row index, which produces a longer format.

import pandas as pd

# Sample wide format data

df_wide = pd.DataFrame({'Math': [90, 78], 'Science': [85, 92]}, index=['Alice', 'Bob'])

# Using stack to convert to long format

df_long = df_wide.stack().reset_index()

df_long.columns = ['Student', 'Subject', 'Score']

Note: The stack method works best when you have a multi-index DataFrame or when you want to reshape the entire DataFrame. It's efficient and concise for wide-to-long transformations.
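A variation on the same idea names the axes up front, so the column labels do not have to be assigned afterwards. This is a sketch of one possible style, not the only way to do it; rename_axis and reset_index(name=...) are standard pandas calls.

```python
import pandas as pd

df_wide = pd.DataFrame(
    {"Math": [90, 78], "Science": [85, 92]}, index=["Alice", "Bob"]
)

# Name the row and column axes, then stack: the result is a Series
# indexed by (Student, Subject).
stacked = df_wide.rename_axis(index="Student", columns="Subject").stack()

# Turn the index levels back into ordinary columns.
df_long = stacked.reset_index(name="Score")
print(df_long)
```

The intermediate `stacked` Series is itself useful: individual scores can be looked up with a (student, subject) pair.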

3.4 Convert wide to long via melt

The melt function is more flexible than stack and can reshape specific columns.

# Using melt to convert to long format

df_long = pd.melt(df_wide.reset_index(), id_vars='index', value_vars=['Math', 'Science'])

df_long.columns = ['Student', 'Subject', 'Score']

Note: When you want to reshape only specific columns, or when you need more control over the reshaping process, melt is the tool of choice.
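The "specific columns" point can be sketched as follows. The extra Art column is made up for illustration; var_name and value_name are melt parameters that name the output columns directly.

```python
import pandas as pd

# Wide table with a hypothetical extra subject that we do not want to melt.
df_wide = pd.DataFrame({
    "Student": ["Alice", "Bob"],
    "Math": [90, 78],
    "Science": [85, 92],
    "Art": [70, 88],
})

# Only Math and Science are reshaped; Art is left out of the result.
df_long = df_wide.melt(
    id_vars="Student",
    value_vars=["Math", "Science"],
    var_name="Subject",
    value_name="Score",
)
print(df_long)
```

Columns that appear in neither id_vars nor value_vars are simply dropped from the output.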

4 - Unstack & Pivot

Reshaping is an essential step in analyzing your data. As you learned in the previous section, converting data from wide to long format helps with certain types of operations and visualizations. Just as often, you will need to transition back to wide format. Two of the primary tools for this in the pandas library are unstack and pivot.

4.1 Convert long to wide via unstack

The unstack method in pandas moves a level of a multi-index into the columns, for example converting a multi-index Series into a DataFrame. This changes the data from long format to wide.

Example: Imagine you have a long format DataFrame like:

import pandas as pd

# Sample data in long format

df_long = pd.DataFrame({
    'Student': ['Alice', 'Alice', 'Bob', 'Bob'],
    'Subject': ['Math', 'Science', 'Math', 'Science'],
    'Score': [90, 85, 78, 92]
})

# Using unstack to convert to wide format

df_wide = df_long.set_index(['Student', 'Subject']).unstack()

df_wide.columns = df_wide.columns.droplevel(0)

Note: Unstack works very well when the data is already indexed the right way, which makes the transformation to wide format almost seamless. It’s a preferred method when you’re working with multi-index DataFrames.
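A slightly different sketch selects the Score column before unstacking, which avoids the droplevel step, and shows what happens when a combination is missing. The incomplete data here is made up: Bob has no Science score.

```python
import pandas as pd

# Hypothetical long data with one missing (student, subject) combination.
df_long = pd.DataFrame({
    "Student": ["Alice", "Alice", "Bob"],
    "Subject": ["Math", "Science", "Math"],
    "Score": [90, 85, 78],
})

# Selecting a single column gives a Series with a two-level index.
scores = df_long.set_index(["Student", "Subject"])["Score"]

# Unstack by level name; the missing combination becomes NaN.
df_wide = scores.unstack("Subject")

# fill_value supplies a replacement for missing combinations.
df_wide_filled = scores.unstack("Subject", fill_value=0)

print(df_wide)
print(df_wide_filled)
```

Whether 0 is an appropriate fill depends on the data; for scores, leaving NaN is often the more honest choice.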

4.2 Convert long to wide via pivot

The pivot method offers a more direct and intuitive approach. Its syntax is explicit: you specify which columns supply the index, the columns, and the values of the resulting DataFrame.

Using the same sample data:

# Using pivot to reshape data

df_wide = df_long.pivot(index='Student', columns='Subject', values='Score').reset_index()

Note: For those new to reshaping data, pivot can be a more user-friendly introduction due to its explicit nature. It clearly defines how the new table will look and works best when reshaping complete DataFrames.
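One caveat worth knowing: pivot raises a ValueError when the same index and column combination appears more than once, because it cannot decide which value to keep. The sketch below uses made-up data in which Alice has two Math scores. Using pivot_table with an aggregation function is one common way around this, shown here as an option rather than a recommendation.

```python
import pandas as pd

# Hypothetical long data with a repeated (Alice, Math) entry.
df_long = pd.DataFrame({
    "Student": ["Alice", "Alice", "Alice", "Bob", "Bob"],
    "Subject": ["Math", "Math", "Science", "Math", "Science"],
    "Score": [90, 94, 85, 78, 92],
})

# pivot cannot reshape when an index/column pair is duplicated.
try:
    df_long.pivot(index="Student", columns="Subject", values="Score")
    pivot_failed = False
except ValueError:
    pivot_failed = True

# pivot_table aggregates the duplicates instead (here: their mean).
df_wide = df_long.pivot_table(
    index="Student", columns="Subject", values="Score", aggfunc="mean"
)
print(pivot_failed)
print(df_wide)
```

If the duplicates are unintended, deduplicating first (see section 1) is usually better than silently averaging them.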

4.3 Unstack or Pivot? Which one should you use?

Both unstack and pivot achieve the same end goal. Yet, their suitability varies based on the situation:

Complexity & Indexing: If your data is already multi-indexed or has a complex hierarchical structure, unstack becomes the natural choice. It is designed to handle such data structures with ease.

Clarity & Control: For straightforward reshaping where you want greater clarity and control over the transformation, pivot is the go-to. Its syntax clearly defines what the resulting wide format will look like.

Note: While both methods have their strengths, consider the structure of your data as well as your comfort level with each method. As you become more experienced, you will develop a preference based on the specific needs of your datasets.
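To see that the two routes lead to the same place, the sketch below reshapes the sample data from section 4.1 both ways and compares the results. The names `via_unstack`, `via_pivot`, and `same_result` are introduced here for illustration.

```python
import pandas as pd

df_long = pd.DataFrame({
    "Student": ["Alice", "Alice", "Bob", "Bob"],
    "Subject": ["Math", "Science", "Math", "Science"],
    "Score": [90, 85, 78, 92],
})

# Route 1: build the multi-index yourself, then unstack.
via_unstack = df_long.set_index(["Student", "Subject"])["Score"].unstack()

# Route 2: describe the target shape directly with pivot.
via_pivot = df_long.pivot(index="Student", columns="Subject", values="Score")

# Check whether both routes produced the same table.
same_result = via_unstack.equals(via_pivot)
print(via_unstack)
print(via_pivot)
print(same_result)
```

On clean data like this, the choice is mostly about which form reads more clearly in your code.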