Python Data Skills 7: Anomalies - Part 2

Linear Regression, K-Nearest Neighbors, Isolation Forest, and When you *SHOULD NOT* use ML

The last post covered the basics of detecting outliers using data manipulation & visualization.

This post is about using Machine Learning to spot outliers within your dataset.

Another name for this technique is the “Statistical Modelling based approach”. Some people use this specific terminology during interviews. Make sure you remember this phrase, as you don’t want to get caught off guard.

Table of Contents:

Linear Regression

K-Nearest Neighbors

Isolation Forest

When you *SHOULD NOT* use ML

1 - Linear Regression

1.1 Linear Regression

Linear regression is one of the most fundamental algorithms in machine learning and statistics. It models the relationship between a dependent variable and one or more independent variables by fitting a linear equation to the observed data. The steps to conduct a linear regression are:

Select a dependent variable which you're trying to predict or explain.

Choose independent variables which you'll use to predict the dependent variable.

Collect data on these variables.

Use this data to train a model. This model will predict the dependent variable from the independent variables.

from sklearn.linear_model import LinearRegression

# Assuming 'target' is your dependent variable and 'features' are your independent variables

model = LinearRegression()

model.fit(df[features], df[target])

I have an entire post dedicated to linear regression here.

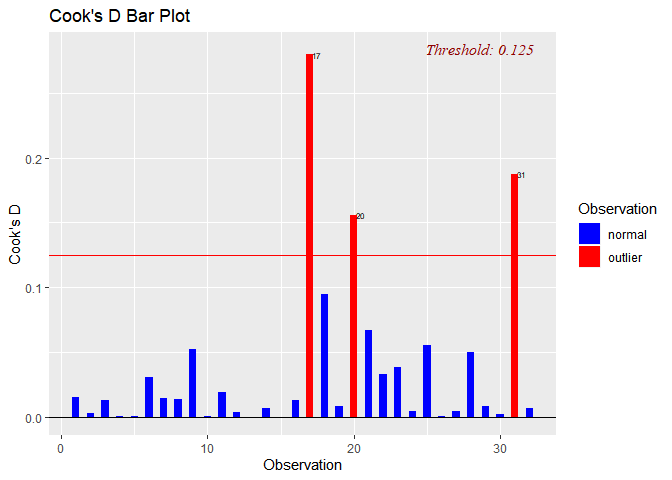

1.2 Cook’s Distance

Cook's distance is a composite measure of how much each observation influences the model's predictions for all observations. It combines the leverage and the residual of each observation. Observations with a high Cook's distance might be outliers, or they might be influential points that need examination.
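For reference, one standard way to write Cook's distance makes those two ingredients explicit. The symbols are assumptions of this sketch rather than notation used elsewhere in this post: e_i is the residual of observation i, h_ii is its leverage (the i-th diagonal entry of the hat matrix), p is the number of fitted parameters including the intercept, and s^2 is the mean squared error of the fit.

```latex
D_i = \frac{e_i^{2}}{p \, s^{2}} \cdot \frac{h_{ii}}{(1 - h_{ii})^{2}}
```

A large residual and a high leverage both push D_i up, which is why a point can be flagged for either reason.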

Here is a code snippet:

import statsmodels.api as sm

from statsmodels.stats.outliers_influence import OLSInfluence

model = sm.OLS(df[target], sm.add_constant(df[features]))

fitted_model = model.fit()

influence = OLSInfluence(fitted_model)

cooks_distance = influence.cooks_distance[0]

1.3 Influence Plot

An influence plot is a scatter plot of the studentized residuals against the leverage of each observation in the dataset. The size of each point reflects its Cook's distance. The plot helps visualize the impact of each data point on the regression line and identify potential outliers.

import matplotlib.pyplot as plt

from statsmodels.graphics.regressionplots import influence_plot

influence_plot(fitted_model)

plt.show()

1.4 Significance (p-value)

A p-value is a statistical measure that helps us judge the significance of the results of a hypothesis test, i.e. whether to reject or fail to reject the null hypothesis. In regression analysis, it can test whether a particular independent variable is significant. A p-value below your chosen significance level (commonly 0.05) indicates that the independent variable is statistically significant.

Here is how you can get the p-values displayed:

# Summary of the fitted model

print(fitted_model.summary())

1.5 Pros & Cons of this Approach

Like any statistical approach, using linear regression for outlier detection has its benefits and potential drawbacks.

Pros:

Comprehensive: The approach checks for outliers in both the dependent variable and the independent variables. Cook's distance measures the effect of deleting a given observation, so it assesses the total influence of each point on the regression.

Visual and Quantitative Assessment: An influence plot allows for visual outlier detection, while Cook's distance provides a numerical measure of an observation's influence. This helps when choosing a cut-off point for what you might consider an outlier.

Addresses Multivariate Outliers: It works well for multivariate data (i.e., when you have many independent variables) and can help identify observations with unusual combinations of predictor values.

Significance Testing: By evaluating p-values, you can also assess which variables significantly contribute to your regression model.

Cons:

Assumes Linear Relationship: The effectiveness of outlier detection using this method assumes a linear relationship between the variables. If the true relationship is not linear, the regression model may incorrectly identify outliers.

Sensitive to Leverage Points: Leverage points can have a large impact on the model and can therefore inflate Cook's distance. This could lead to flagging non-outliers as outliers.

Potentially Computationally Intensive: For very large datasets, fitting a regression model and calculating influence measures can be expensive.

Requires Domain Knowledge: Deciding whether an influential point is an outlier or a valuable piece of data requires understanding the context and domain.
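To make the cut-off idea from the pros list concrete, here is a minimal sketch that computes Cook's distance by hand with NumPy and flags points above a threshold. The synthetic data and the 4/n rule of thumb are assumptions chosen for illustration. They are not a universal standard, and you should pick a cut-off that suits your own data.

```python
import numpy as np

# Synthetic data (hypothetical): y = 2x + 1 plus small noise, with one planted outlier
rng = np.random.default_rng(0)
x = np.linspace(0, 10, 50)
y = 2.0 * x + 1.0 + rng.normal(scale=0.5, size=x.size)
y[-1] += 15.0  # corrupt the last observation, which sits at the edge of the x range

# Design matrix with an intercept column
X = np.column_stack([np.ones_like(x), x])
n, p = X.shape

# Ordinary least squares fit and residuals
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = y - X @ beta

# Leverage: diagonal of the hat matrix H = X (X'X)^-1 X'
leverage = np.einsum("ij,jk,ik->i", X, np.linalg.inv(X.T @ X), X)

# Cook's distance from residuals, leverage, and the mean squared error
mse = residuals @ residuals / (n - p)
cooks_d = (residuals**2 / (p * mse)) * leverage / (1.0 - leverage) ** 2

# Rule-of-thumb cut-off (an assumption of this sketch)
threshold = 4.0 / n
flagged = np.flatnonzero(cooks_d > threshold)
print(flagged)
```

Whatever the cut-off, treat the flagged indices as candidates to inspect, not as rows to delete automatically.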

2 - K-Nearest Neighbors (KNN)

2.1 Intro to KNN

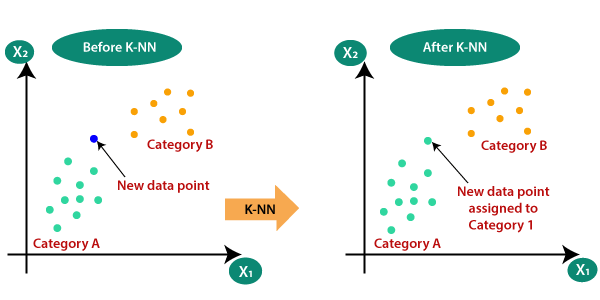

K-Nearest Neighbors (KNN) is a versatile machine learning algorithm that has found application in a variety of tasks, including both classification and regression. At its core, the KNN algorithm examines the 'k' most similar instances (neighbors) to a given data point to determine its outcome or, in the case of outlier detection, its normality.

The KNN algorithm views 'normal' as being like many points and 'abnormal' as being dissimilar to most points. So data points that don't have many neighbors in the dataset, i.e. points that are far away from most others, are outliers. By inspecting the distance from a point to its nearest neighbors, we can generate an outlier score and use it to decide whether the point is an outlier.

2.2 PyOD & Sklearn

For implementing the KNN outlier detection in Python, two powerful libraries are Python Outlier Detection (PyOD) and Scikit-learn (sklearn). PyOD is a comprehensive toolkit for detecting outlying objects in multivariate data, and it's specifically developed for outlier detection. It contains more than 20 algorithms, including classical outlier detection models like KNN, and state-of-the-art, neural-network-based models.

Sklearn is a comprehensive library for machine learning in Python. It offers a broad suite of high-level tools for pre-processing input data, fitting models, and evaluating them. Sklearn's versatile capabilities make it a one-stop shop for machine learning practitioners.

from pyod.models.knn import KNN # pyOD's KNN module

from sklearn.preprocessing import StandardScaler # StandardScaler from sklearn

2.3 Anomaly Scores

Anomaly scores are a critical component in the process of outlier detection. They serve as a measurement or score that quantifies the degree of abnormality of a data point. In simpler terms, an anomaly score indicates how much a data point deviates from what we might expect given the general characteristics of the dataset. High anomaly scores are assigned to data points that deviate significantly from the norm, thus possibly being outliers, while more 'normal' or expected data points are given lower scores.

These scores are usually based on the distance of a data point from its nearest neighbors. For each data point, the algorithm finds its 'k' nearest neighbors and calculates the distances to them. These distances are then aggregated (often by taking the average or the largest) to yield the anomaly score for that data point.

'Normal' data points are likely to have other 'normal' points close to them, leading to a small distance and thus a low anomaly score. In contrast, outliers are less likely to have other points nearby. Their greater distance to their nearest neighbors results in a high anomaly score.

These anomaly scores then become the basis for classifying a data point as an outlier or not. A common approach is to set a threshold and classify all data points with an anomaly score above it as outliers. The choice of threshold depends on the specific context and on the proportion of outliers we expect in the data.
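The scoring and thresholding steps described above can be sketched in a few lines of NumPy. This is a simplified illustration and not how PyOD implements it internally. The function name `knn_scores`, the synthetic data, and the 98th-percentile threshold are all assumptions made for the example.

```python
import numpy as np

def knn_scores(X, k=5, method="largest"):
    """Score each row by its distance to its k nearest neighbors."""
    # Pairwise Euclidean distances (fine for small data; O(n^2) memory)
    diffs = X[:, None, :] - X[None, :, :]
    dists = np.sqrt((diffs**2).sum(axis=-1))
    # Sort each row; column 0 is the point itself (distance 0), so skip it
    neighbor_dists = np.sort(dists, axis=1)[:, 1 : k + 1]
    if method == "largest":
        return neighbor_dists[:, -1]    # distance to the k-th neighbor
    return neighbor_dists.mean(axis=1)  # average distance to the k neighbors

rng = np.random.default_rng(42)
# 100 'normal' points plus one planted outlier in the last row
X = np.vstack([rng.normal(size=(100, 2)), [[8.0, 8.0]]])

scores = knn_scores(X, k=5)
# Threshold at the 98th percentile, i.e. assume roughly 2% of points are outliers
threshold = np.percentile(scores, 98)
is_outlier = scores > threshold
print(np.flatnonzero(is_outlier))
```

Switching `method` to anything other than "largest" uses the average distance instead, which is the other aggregation mentioned above.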

2.4 Implementation

With PyOD and sklearn, it becomes straightforward to execute KNN outlier detection. Here is an illustrative example:

scaler = StandardScaler()

df_scaled = scaler.fit_transform(df) # Scale the dataset

# Training the KNN model

clf = KNN(contamination=0.02, n_neighbors=5) # We expect 2% of the data points to be outliers

clf.fit(df_scaled) # Fit the model to the scaled dataset

# Retrieving the labels assigned by the model (0 = inlier, 1 = outlier)

y_train_pred = clf.labels_

# Retrieving the anomaly scores (higher = more abnormal)

y_train_scores = clf.decision_scores_

In the above code, we first scale our dataset using sklearn's StandardScaler so that all features are on a similar scale. This is a crucial pre-processing step for distance-based algorithms like KNN. We then initialize our KNN model and fit it to the scaled data. Finally, we retrieve the labels the model assigned and the anomaly scores it computed.

2.5 Limitations of this technique

While KNN is a flexible and powerful tool for outlier detection, it comes with a few limitations. The main drawback is its inefficiency on large datasets. KNN is a distance-based algorithm: a brute-force search computes the distance between every pair of data points, so the computational cost grows roughly quadratically with the number of points.

Furthermore, the KNN algorithm's effectiveness relies on the choice of 'k', the number of neighbors considered. An inappropriate 'k' value can lead to poor outlier detection performance. Automatic selection of 'k' is a research area in its own right.
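To see why 'k' matters, here is a small sketch with hand-built data: a grid of inliers plus two outliers that sit right next to each other. The data and the helper name `kth_neighbor_distance` are hypothetical and exist only to illustrate the point.

```python
import numpy as np

def kth_neighbor_distance(X, k):
    """Distance from each row to its k-th nearest neighbor (brute force)."""
    dists = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    return np.sort(dists, axis=1)[:, k]  # column 0 is the point itself

# 49 inliers on a regular grid, plus two outliers that are close to each other
grid = np.array([[i * 0.5, j * 0.5] for i in range(7) for j in range(7)])
pair = np.array([[10.0, 10.0], [10.1, 10.0]])
X = np.vstack([grid, pair])  # the outliers are rows 49 and 50

for k in (1, 2):
    scores = kth_neighbor_distance(X, k)
    top2 = sorted(np.argsort(scores)[-2:].tolist())  # two highest-scoring rows
    print(f"k={k}: highest scores at rows {top2}")
```

With k=1, each outlier's nearest neighbor is the other outlier, so the pair scores lower than every grid point and goes unnoticed. With k=2, the second neighbor is far away and both rows rise to the top.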

3 - Isolation Forests

3.1 Introduction to Isolation Forests

Isolation Forest is an unsupervised machine learning algorithm used for anomaly detection. It identifies anomalies or outliers within a dataset based on the principle of isolating them.

An Isolation Forest 'isolates' observations by selecting a feature at random and then selecting a random split value between the largest and smallest values of that feature. The argument goes: isolating anomalous observations is easier, because we only need a few conditions to separate cases that are very different from the rest. Hence, when a forest of random trees produces shorter paths for particular samples, they are very likely to be anomalies.
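The isolation idea can be sketched directly: keep splitting at random until a chosen point is alone, and count the splits. This is a deliberately simplified illustration (no subsampling, no stored trees) and is not how scikit-learn implements it. The function name `isolation_depth` and the synthetic data are assumptions.

```python
import numpy as np

def isolation_depth(X, idx, rng):
    """Count random splits needed until row `idx` is alone in its partition."""
    rows = np.arange(len(X))
    depth = 0
    while len(rows) > 1:
        feature = rng.integers(X.shape[1])   # pick a feature at random
        lo, hi = X[rows, feature].min(), X[rows, feature].max()
        if lo == hi:                         # cannot split on this feature
            if np.all(X[rows] == X[rows[0]]):
                break                        # remaining rows are identical
            continue
        split = rng.uniform(lo, hi)          # random split between min and max
        # Keep only the side of the split that contains the target row
        if X[idx, feature] < split:
            rows = rows[X[rows, feature] < split]
        else:
            rows = rows[X[rows, feature] >= split]
        depth += 1
    return depth

rng = np.random.default_rng(0)
# 200 'normal' points plus one planted outlier in the last row
X = np.vstack([rng.normal(size=(200, 2)), [[6.0, 6.0]]])

outlier_depth = np.mean([isolation_depth(X, 200, rng) for _ in range(100)])
inlier_depth = np.mean([isolation_depth(X, 0, rng) for _ in range(100)])
print(f"average depth, outlier: {outlier_depth:.1f}, inlier: {inlier_depth:.1f}")
```

Averaged over many random runs, the planted outlier should need noticeably fewer splits than a point near the center of the data, which is the 'shorter path' signal the forest relies on.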

3.2 Inliers vs. Outliers

In the context of the Isolation Forest, "inliers" are the normal data points, while "outliers" are the anomalies. The anomaly score helps distinguish between the two. In the original formulation of the algorithm, scores close to 1 strongly suggest an outlier, while scores well below 0.5 indicate an inlier. If every point scores around 0.5, the dataset has no distinct anomalies. Note that scikit-learn reports a shifted, sign-flipped version of this score, which is why negative values mark outliers in the code in section 3.3.

3.3 Application of Isolation Forests

In Python, the scikit-learn library provides an implementation of the Isolation Forest algorithm. Here's a simple way to use it:

from sklearn.ensemble import IsolationForest

# Assuming df is your DataFrame

clf = IsolationForest(random_state=0)

clf.fit(df)

# The anomaly score is computed with decision_function

# Negative scores are outliers

scores = clf.decision_function(df)

I’ve attached a screenshot of the documentation above. You can go directly to the link for isolation forests here.
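To turn the scores into explicit flags, here is a minimal sketch on synthetic data. The data and variable names are assumptions for illustration. `predict` returns -1 for outliers and 1 for inliers, which lines up with the sign of `decision_function`.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)
# 200 'normal' points plus one planted outlier in the last row
X = np.vstack([rng.normal(size=(200, 2)), [[8.0, 8.0]]])

clf = IsolationForest(random_state=0).fit(X)
scores = clf.decision_function(X)  # negative values are flagged as outliers
labels = clf.predict(X)            # -1 for outliers, 1 for inliers

flagged = np.flatnonzero(labels == -1)
print(len(flagged), "rows flagged")
```

If you have a rough idea of the share of outliers in your data, the `contamination` parameter lets you set it instead of relying on the default.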

3.4 Limitations

While Isolation Forests are a powerful method for detecting outliers, they do have their limitations:

The algorithm assumes independence of features. When features are strongly dependent on each other, it may not perform well.

Isolation Forests may not perform as well on high-dimensional data, because the 'path length' becomes less meaningful.

The results of Isolation Forests can be sensitive to the number of trees in the forest. There isn't a foolproof way of determining the best number.

Now that we’ve covered some ways to automatically detect outliers within your dataset, let’s talk about some reasons why you might not want to do it.

4 - When you SHOULD NOT use ML

4.1 Transparency and Interpretability

Traditional techniques offer a transparency and ease of interpretation that's often lacking in machine learning models. For example, a box plot or a scatter plot can immediately convey which data points are outliers. This is not always the case with more complex models such as Isolation Forests or K-Nearest Neighbors, where the decision process can be more opaque.

This transparency is extremely important for certain industries which have huge amounts of regulation. Good luck convincing certain large hedge funds that you are going to be using ML to clean your data, and then use ML to model it.
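For contrast, the rule behind a box plot fits in a few lines and can be explained to anyone. This is a minimal sketch using made-up measurements, with the conventional 1.5 × IQR whiskers as an assumed cut-off.

```python
import numpy as np

values = np.array([10, 12, 11, 13, 12, 11, 10, 14, 12, 45])  # hypothetical measurements

q1, q3 = np.percentile(values, [25, 75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr  # the usual box-plot whiskers

outliers = values[(values < lower) | (values > upper)]
print(outliers)  # prints [45]
```

Every number in that rule (the quartiles, the fences, the flagged value) can be read off and justified, which is exactly the kind of audit trail a regulated environment tends to ask for.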

4.2 Machine Learning Overhead

Machine learning models can sometimes be overkill for simple tasks. Training a complex model can be expensive and time-consuming, especially when dealing with large datasets. Additionally, machine learning algorithms need a certain level of expertise: they have hyperparameters that need tuning and assumptions that need validating. They also need a large amount of data to perform well.

4.3 Robustness to Small Datasets (Overfitting)

Traditional techniques are generally more robust to small datasets. Machine learning algorithms, especially the more complex ones, need large amounts of data to train and provide reliable results. With small datasets they are prone to overfitting, so simpler techniques can often yield better results.