MLOps 16: Deployment on AWS

Intro to Amazon Web Services, Getting Started with AWS, Deployment in AWS, How to sell this project (to a hiring manager)


AWS is another platform you can deploy on. As usual, go ahead and read this post if you think you’ll be dealing with AWS; if not, there’s no real reason to read it.

Thanks to

for helping out with this post. FYI, the deployment for this one is huuuuuuge.

My man BowTiedRaptor keeps blessing me with opportunities to write for you all. Long-time readers may remember me from a couple of posts:

https://bowtiedraptor.substack.com/p/but-how-do-we-get-our-data

https://bowtiedraptor.substack.com/p/3-perspectives-on-how-to-comment

https://bowtiedraptor.substack.com/p/becoming-bonjwa-in-the-data-industry

These posts are pretty fire, just saying. Anyway, thanks to Raptor, I'm here today to tell you how to deploy a model as an API in AWS. This is very common work in MLOps, or even DevOps.

Table of Contents

Intro to Amazon Web Services

Getting started with AWS

Deployment in AWS (this is long)

How to sell this project (to a hiring manager)

1 - Intro to Amazon Web Services

Amazon Web Services (AWS) is a comprehensive and widely adopted cloud platform. It offers over 200 fully featured services from data centers globally. In the domain of Machine Learning Operations (MLOps), AWS provides a suite of services that can be instrumental in deploying, managing, and scaling ML models. Let's explore the key AWS services that are particularly relevant for MLOps.

Amazon Web Services (AWS)

AWS is a subsidiary of Amazon. It provides on-demand cloud computing platforms and APIs to individuals, companies, and governments. It offers a broad set of global cloud-based products. This includes compute, storage, databases, analytics, networking, mobile, developer tools, management tools, IoT, security, and enterprise applications.

Amazon Elastic Compute Cloud (EC2)

EC2 provides scalable computing capacity in the AWS cloud. It reduces the time required to obtain and boot new server instances. This allows you to quickly scale capacity, both up and down, as your computing requirements change.

EC2 can host machine learning models. This allows you to run large-scale model training and inference workloads with ease.

Amazon Elastic Container Service (ECS)

ECS is a fully managed container orchestration service. It makes it easy to deploy, manage, and scale containerized applications.

ECS is ideal for deploying ML models packaged in Docker containers. It handles the complexity of managing container orchestration.

Amazon Elastic Kubernetes Service (EKS)

EKS fully manages Kubernetes on AWS. It automates key tasks such as patching, node provisioning, and updates.

EKS is suitable for ML workloads that benefit from Kubernetes. This provides an optimized environment for deploying, scaling, and managing ML models.

Note: EKS is more common in the industry because of Kubernetes (often called k8s). Kubernetes is one of the most popular open source projects in the world. It’s a container orchestration engine, and it is ubiquitous in tech. Getting some experience with EKS is a plus. If you have some extra time, redoing this lab in EKS can be beneficial.

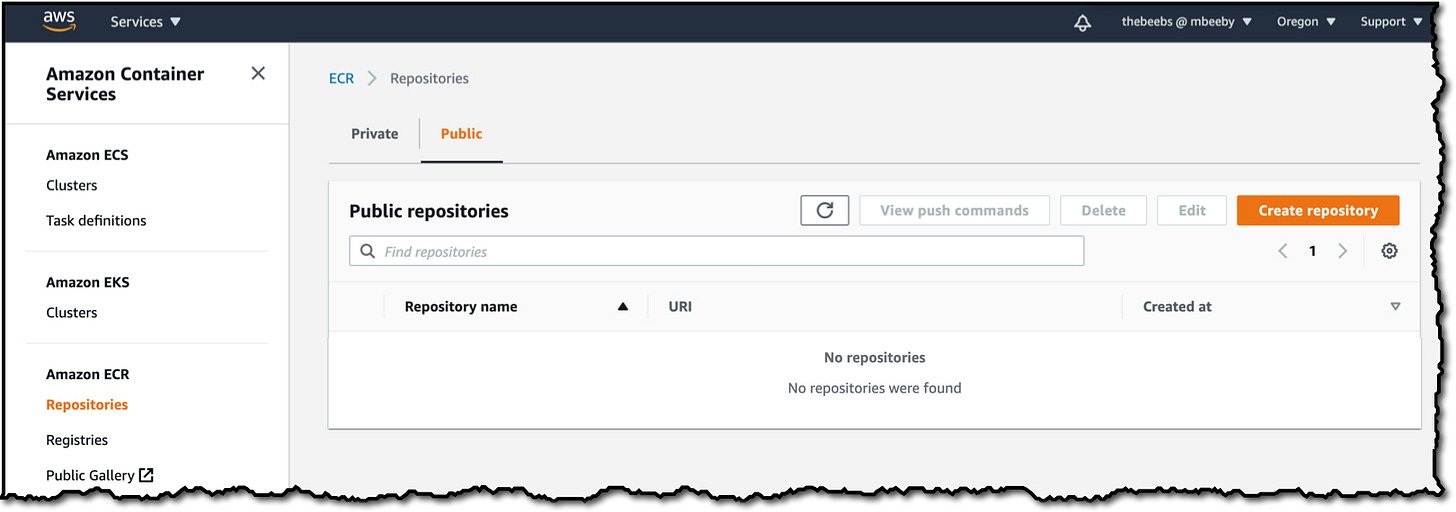

Amazon Elastic Container Registry (ECR)

ECR is a fully managed Docker container registry. It makes it easy for developers to store, manage, and deploy Docker container images.

ECR can be used to store ML model containers securely and integrate them seamlessly with ECS and EKS deployments.

AWS Fargate

Fargate is a serverless compute engine for containers that works with both ECS and EKS. It removes the need to provision and manage servers.

Fargate simplifies the deployment of containerized ML models. It does this by removing the need to manage the underlying infrastructure.

AWS Lambda

Lambda is a serverless compute service. It lets you run code without provisioning or managing servers. It executes your code only when needed and scales automatically.

Lambda can be used for lightweight ML inference, running code in response to events, such as changes in data in an Amazon S3 bucket.
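To make the S3-triggered case concrete, here is a minimal sketch of a Python Lambda handler. The `run_inference` function is a hypothetical placeholder (it is not from this post), and the event shape assumed here is the standard S3 event notification with a `Records` list.

```python
import json
from urllib.parse import unquote_plus


def run_inference(bucket: str, key: str) -> str:
    """Hypothetical placeholder: load the object and score it with your model."""
    return f"scored s3://{bucket}/{key}"


def lambda_handler(event, context):
    """Entry point that Lambda invokes for each S3 event notification."""
    results = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        results.append(run_inference(bucket, key))
    return {"statusCode": 200, "body": json.dumps(results)}
```

In a real function you would swap the placeholder for code that downloads the object and calls your model. Keep the model small, since Lambda is meant for lightweight work.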

Amazon SageMaker

SageMaker is a fully managed service. It provides every developer and data scientist with the ability to build, train, and deploy machine learning models quickly.

SageMaker streamlines the entire ML workflow, from data preparation and analysis to training and deployment. This makes it a cornerstone service for MLOps on AWS.

2 - Getting Started with AWS

Deploying machine learning models on Amazon Web Services (AWS) requires initial setup steps. This is to ensure secure and efficient management of resources. Here’s a guide to help you get started with AWS.

2.1 Setting up an AWS account

Sign Up:

Visit the AWS homepage.

Click on “Create an AWS Account.”

Follow the sign-up process, including providing your email address, password, and AWS account name.

Billing Information:

During the setup, you'll be prompted to enter billing information. AWS offers a Free Tier for new accounts, but you’ll need to provide a credit card.

Identity Verification:

Verify your identity via phone or SMS. AWS requires this step to protect your account.

2.2 Creating an IAM user

Access the IAM Console:

After logging into your AWS Account, navigate to the IAM (Identity and Access Management) Console.

Create a New User:

Choose “Users” from the navigation pane and click “Add User.”

Enter a username and select “Programmatic access” to enable an access key ID and secret access key for the AWS API, CLI, SDK, and other development tools.

Set Permissions:

Attach policies directly or add the user to a group with the necessary permissions.

For MLOps, consider permissions for services like EC2, S3, SageMaker, ECS, and EKS.

Review and Create:

Review the settings and create the user.

Important: Download or securely store the Access Key ID and Secret Access Key shown, as they are required for AWS CLI and SDKs.
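If you want to see what a narrowly scoped permission set could look like, here is a rough sketch that builds an IAM policy document allowing a user to push images to a single ECR repository. The region, account ID, and repository name are placeholder assumptions, and your own project will likely need more than this (for example ECS permissions).

```python
import json


def ecr_push_policy(region: str, account_id: str, repository: str) -> dict:
    """Build a policy document that only allows pushing to one ECR repository."""
    repo_arn = f"arn:aws:ecr:{region}:{account_id}:repository/{repository}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Requesting a login token cannot be scoped to one repository.
                "Effect": "Allow",
                "Action": "ecr:GetAuthorizationToken",
                "Resource": "*",
            },
            {
                # Actions Docker needs in order to upload layers and the manifest.
                "Effect": "Allow",
                "Action": [
                    "ecr:BatchCheckLayerAvailability",
                    "ecr:InitiateLayerUpload",
                    "ecr:UploadLayerPart",
                    "ecr:CompleteLayerUpload",
                    "ecr:PutImage",
                ],
                "Resource": repo_arn,
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder values; paste the printed JSON into the IAM policy editor.
    print(json.dumps(ecr_push_policy("us-east-1", "123456789012", "my-ml-model"), indent=2))
```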

2.3 Install and Configure the AWS CLI

Download and Install:

Download the AWS CLI from the AWS CLI page.

Follow the installation instructions for your operating system.

Configure the CLI:

Open a terminal or command prompt.

Run aws configure. When prompted, enter the IAM user’s Access Key ID, Secret Access Key, default region name, and default output format.
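For context, aws configure stores your answers in two plain-text INI files, ~/.aws/credentials and ~/.aws/config. The sketch below parses sample file contents (the key values are made-up placeholders) so you can see the layout without touching your real files.

```python
import configparser

# Placeholder contents in the layout of ~/.aws/credentials
SAMPLE_CREDENTIALS = """
[default]
aws_access_key_id = AKIAEXAMPLEKEYID
aws_secret_access_key = exampleSecretKeyValue
"""

# Placeholder contents in the layout of ~/.aws/config
SAMPLE_CONFIG = """
[default]
region = us-east-1
output = json
"""


def read_profile(credentials_text: str, config_text: str, profile: str = "default") -> dict:
    """Return the non-secret settings stored for one profile."""
    creds = configparser.ConfigParser()
    creds.read_string(credentials_text)
    conf = configparser.ConfigParser()
    conf.read_string(config_text)
    return {
        "access_key_id": creds[profile]["aws_access_key_id"],
        "region": conf[profile]["region"],
        "output": conf[profile]["output"],
    }


if __name__ == "__main__":
    print(read_profile(SAMPLE_CREDENTIALS, SAMPLE_CONFIG))
```

Never commit these files to a repository; the secret access key grants whatever permissions the IAM user has.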

2.4 Getting Started with AWS CodeCommit

AWS CodeCommit is a source control service that hosts Git-based repositories, making it ideal for collaborative ML projects.

Create a CodeCommit Repository:

In the AWS Management Console, go to AWS CodeCommit.

Choose “Create repository” and provide a name and description.

Set Up Access:

To access the repository, set up Git credentials in the IAM console for your IAM user.

Configure the local machine with these credentials to push and pull code.

Clone and Use the Repository:

Clone the repository to your local machine using the Git command:

git clone https://git-codecommit.[region].amazonaws.com/v1/repos/[repository-name]

You can now use this repository for your ML project, leveraging AWS’s secure and managed source control.

3 - Deployment in AWS

This one’s a long one.

Continuous Training and Prediction Setup

First, let's set up the essential components for our ML application:

main.py (FastAPI application):

    from fastapi import FastAPI
    from pydantic import BaseModel

    app = FastAPI()


    class PredictionInput(BaseModel):
        feature1: float
        feature2: float


    @app.post("/predict")
    def make_prediction(input_data: PredictionInput):
        # Logic for prediction goes here
        return {"prediction": "result"}

start.sh (start script):

    #!/bin/bash
    uvicorn main:app --host 0.0.0.0 --port 80

train_pipeline.py (the script for training your model):

    import joblib
    import pandas as pd
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.metrics import accuracy_score
    from sklearn.model_selection import train_test_split


    def load_data():
        # Replace this with the path to your dataset
        data = pd.read_csv('path/to/your/dataset.csv')
        return data


    def preprocess_data(data):
        # Basic preprocessing steps like handling missing values, feature encoding, etc.
        # This is a placeholder function, modify as per your dataset's requirements
        # ffill() replaces fillna(method='ffill'), which is deprecated in recent pandas
        processed_data = data.ffill()
        return processed_data


    def train_model(X_train, y_train):
        # Initialize a random forest classifier
        model = RandomForestClassifier(n_estimators=100, random_state=42)
        model.fit(X_train, y_train)
        return model


    def evaluate_model(model, X_test, y_test):
        predictions = model.predict(X_test)
        accuracy = accuracy_score(y_test, predictions)
        print(f"Model Accuracy: {accuracy}")


    def save_model(model, filename='trained_model.joblib'):
        joblib.dump(model, filename)
        print(f"Model saved as {filename}")


    def main():
        # Load and preprocess data
        data = load_data()
        processed_data = preprocess_data(data)

        # Split the data into training and testing sets
        X = processed_data.drop('target_column', axis=1)
        y = processed_data['target_column']
        X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

        # Train the model
        model = train_model(X_train, y_train)

        # Evaluate the model
        evaluate_model(model, X_test, y_test)

        # Save the model
        save_model(model)


    if __name__ == "__main__":
        main()

predict_pipeline.py:

    import joblib
    import pandas as pd


    class ModelPredictor:
        def __init__(self, model_path):
            # Load the trained model from the given path
            self.model = joblib.load(model_path)

        def preprocess_input(self, input_data):
            # This method preprocesses the input data before prediction
            # Modify this method according to the preprocessing steps used during model training
            processed_data = pd.DataFrame(input_data, index=[0])
            return processed_data

        def predict(self, input_data):
            # Preprocess the input data
            processed_data = self.preprocess_input(input_data)

            # Make a prediction
            prediction = self.model.predict(processed_data)
            return prediction


    def main():
        # Example usage
        model_predictor = ModelPredictor('path/to/trained_model.joblib')

        # Example input data (this should match the format and feature set used during training)
        input_data = {
            "feature1": 0.5,
            "feature2": 1.5,
            # ... add other features as required
        }

        prediction = model_predictor.predict(input_data)
        print(f"Prediction: {prediction}")


    if __name__ == "__main__":
        main()
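Once the API is running, you will want a quick way to hit it. Below is a small sketch of a client that builds a POST request for the /predict endpoint using only the standard library. The base URL is an assumption (a locally running container on port 80); swap in your load balancer's address later.

```python
import json
import urllib.request


def build_predict_request(base_url: str, feature1: float, feature2: float) -> urllib.request.Request:
    """Build a JSON POST request matching the PredictionInput fields."""
    payload = json.dumps({"feature1": feature1, "feature2": feature2}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/predict",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Assumed address; nothing is sent until urlopen is called.
    request = build_predict_request("http://localhost:80", 0.5, 1.5)
    print(request.full_url, request.data)
    # To actually send it once the API is up:
    # with urllib.request.urlopen(request) as response:
    #     print(json.loads(response.read()))
```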

Setting Up Amazon Elastic Container Registry (ECR)

Dockerfile:

    FROM python:3.8-slim
    COPY . /app
    WORKDIR /app
    RUN pip install -r requirements.txt
    # Make sure the start script is executable inside the image
    RUN chmod +x start.sh
    ENTRYPOINT ["./start.sh"]

Create an ECR Repository:

Using AWS Management Console:

Log in to the AWS Management Console:

Navigate to the AWS Management Console and log in.

Open the Amazon ECR Console:

In the AWS services search bar, type "ECR" and select "Elastic Container Registry".

Create a New Repository:

Click the “Create repository” button.

Enter a name for your repository, for instance my-ml-model.

Configure other settings, like image scan on push and tag immutability, as per your requirements.

Click “Create repository”.

Using AWS CLI:

Create Repository:

Open your command line or terminal.

Run the following AWS CLI command:

aws ecr create-repository --repository-name my-ml-model

Once you've created your ECR repository, the next step is to authenticate your Docker client with it. This allows you to push and pull images to and from your repository.

Retrieve an Authentication Token:

AWS ECR uses a Docker CLI authentication token to authenticate your Docker client to your registry.

Run the following command to retrieve the token and authenticate automatically:

aws ecr get-login-password --region your-region | docker login --username AWS --password-stdin your-aws-account-id.dkr.ecr.your-region.amazonaws.com

Replace your-region with your AWS region and your-aws-account-id with your actual AWS account ID.

Verification:

After running the login command, you should see a message indicating a successful login to the ECR repository.

This means your Docker client is now authenticated and can push and pull images to and from your ECR repository.
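The registry address and image names follow a fixed pattern, so it can help to generate them rather than type them by hand. This is a small sketch with hypothetical helper names; the account ID and region in the demo are placeholders.

```python
import re


def ecr_registry(account_id: str, region: str) -> str:
    """Return the registry hostname that docker login and docker push expect."""
    if not re.fullmatch(r"\d{12}", account_id):
        raise ValueError("AWS account IDs are 12 digits")
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com"


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Return the full image reference to use with docker tag and docker push."""
    return f"{ecr_registry(account_id, region)}/{repository}:{tag}"


if __name__ == "__main__":
    # Placeholder account ID and region.
    print(ecr_image_uri("123456789012", "us-east-1", "my-ml-model"))
```

The same string is what you later paste into the ECS task definition as the container image.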

AWS CodeBuild for Docker Image Creation

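buildspec.yml:

    version: 0.2

    phases:
      pre_build:
        commands:
          # aws ecr get-login was removed in AWS CLI v2; get-login-password replaces it
          # AWS_ACCOUNT_ID is assumed to be set as an environment variable on the build project
          - aws ecr get-login-password --region $AWS_DEFAULT_REGION | docker login --username AWS --password-stdin $AWS_ACCOUNT_ID.dkr.ecr.$AWS_DEFAULT_REGION.amazonaws.com
      build:
        commands:
          - docker build -t my-ml-model .
          - docker tag my-ml-model:latest $AWS_ACCOUNT_ID.dkr.ecr.$AWS_DEFAULT_REGION.amazonaws.com/my-ml-model:latest
      post_build:
        commands:
          - docker push $AWS_ACCOUNT_ID.dkr.ecr.$AWS_DEFAULT_REGION.amazonaws.com/my-ml-model:latest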

Deploying on Amazon Elastic Container Service (ECS)

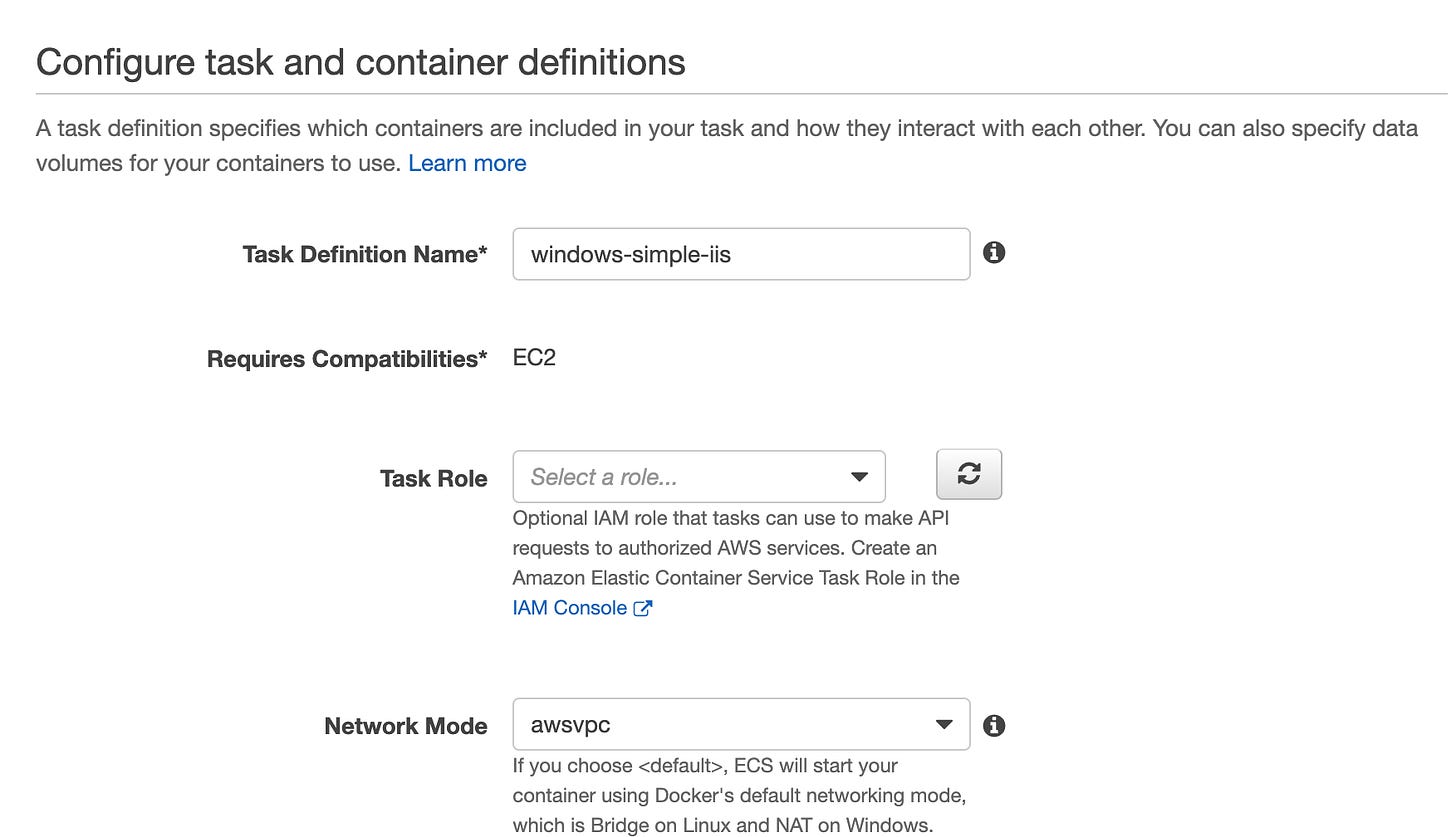

Creating an ECS Task Definition

A task definition is like a blueprint for your application. It tells ECS how to run a Docker container. This includes which Docker image to use, the required CPU and memory, environment variables, and other settings.

Navigate to the ECS Console:

Log in to the AWS Management Console and open the ECS service.

Create a New Task Definition:

Click on "Task Definitions" and then "Create new Task Definition".

Select launch type compatibility (Fargate or EC2).

Click "Next step".

Configure Task and Container Definitions:

Task Definition Name: Give your task a name, e.g., my-ml-task.

Task Role: Select an IAM role that grants your task the required permissions.

Network Mode: Choose the network mode (bridge, host, or awsvpc). Fargate tasks must use awsvpc.

Task Size: Specify the amount of CPU and memory.

Container Definitions: Click "Add container" to define your container.

Container Name: e.g., ml-container.

Image: Specify the Docker image to use from ECR, e.g., 123456789012.dkr.ecr.your-region.amazonaws.com/my-ml-model:latest.

Memory Limits: Set the memory requirements.

Port Mappings: If your container serves HTTP requests, set the container port to 80 (or whichever port your app uses).

Click "Add" to add the container definition, then "Create" to finalize the task definition.
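The console steps above boil down to a JSON document. Here is a sketch of what that document could look like for a Fargate task, built as a Python dict. The names my-ml-task and ml-container come from the steps above; the role ARN, CPU, and memory values are assumptions for illustration.

```python
import json


def build_task_definition(
    image_uri: str,
    execution_role_arn: str,
    family: str = "my-ml-task",
    container_name: str = "ml-container",
    container_port: int = 80,
    cpu: str = "256",
    memory: str = "512",
) -> dict:
    """Build a Fargate-compatible task definition with a single container."""
    return {
        "family": family,
        "networkMode": "awsvpc",  # required for Fargate
        "requiresCompatibilities": ["FARGATE"],
        "cpu": cpu,  # CPU units, 256 is a quarter of a vCPU
        "memory": memory,  # MiB
        "executionRoleArn": execution_role_arn,  # lets ECS pull the image from ECR
        "containerDefinitions": [
            {
                "name": container_name,
                "image": image_uri,
                "essential": True,
                "portMappings": [{"containerPort": container_port, "protocol": "tcp"}],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder image URI and role ARN.
    task_def = build_task_definition(
        "123456789012.dkr.ecr.us-east-1.amazonaws.com/my-ml-model:latest",
        "arn:aws:iam::123456789012:role/ecsTaskExecutionRole",
    )
    print(json.dumps(task_def, indent=2))
```

If you save the output to a file, something like aws ecs register-task-definition --cli-input-json file://taskdef.json should register it without clicking through the console.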

Creating an ECS Cluster

An ECS Cluster hosts your tasks and provides the resources they need to run.

Create a Cluster:

In the ECS console, go to "Clusters" and click "Create Cluster".

Choose a cluster template (for Fargate, select the "Networking only" template).

Click "Next step".

Configure Cluster:

Cluster Name: Give your cluster a name, e.g., my-ml-cluster.

VPC and Subnets: Select the VPC and subnets where your tasks should run.

Security Group: Choose a security group or create a new one.

Click "Create" to create your cluster.

Creating a Service and Running Tasks

Services manage the running tasks in your cluster and ensure that the specified number of tasks are always running.

Create a Service:

In your cluster view, click on the "Services" tab and then "Create".

Service Configuration:

Launch Type: Choose either EC2 or Fargate.

Task Definition: Select the task definition you created earlier.

Service Name: e.g., my-ml-service.

Number of Tasks: Define how many instances of your task you want to run.

Network Configuration: Configure the VPC, subnets, and security groups.

Load Balancing: Optionally, associate a load balancer with your service.

Click "Next step" and go through the subsequent screens to configure Auto Scaling (optional) and review.

Deploy the Service:

Click "Create Service". AWS will launch the specified number of task instances according to your task definition.

Your service will ensure that your tasks are healthy and restart them if they fail.
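As with the task definition, the service settings can be written down as data. The sketch below assembles the parameters in the shape of the ECS CreateService API as I understand it. The subnet, security group, and target group identifiers are placeholders, and the choice to disable public IPs assumes private subnets with a NAT gateway or VPC endpoints.

```python
def build_service_request(
    cluster: str,
    service_name: str,
    task_definition: str,
    subnets: list,
    security_groups: list,
    desired_count: int = 2,
    assign_public_ip: bool = False,
    target_group_arn: str = None,
    container_name: str = "ml-container",
    container_port: int = 80,
) -> dict:
    """Assemble the settings for an ECS service running on Fargate."""
    request = {
        "cluster": cluster,
        "serviceName": service_name,
        "taskDefinition": task_definition,
        "desiredCount": desired_count,
        "launchType": "FARGATE",
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": list(subnets),
                "securityGroups": list(security_groups),
                "assignPublicIp": "ENABLED" if assign_public_ip else "DISABLED",
            }
        },
    }
    if target_group_arn:
        # Optional: register the tasks with a load balancer target group.
        request["loadBalancers"] = [
            {
                "targetGroupArn": target_group_arn,
                "containerName": container_name,
                "containerPort": container_port,
            }
        ]
    return request


if __name__ == "__main__":
    # Placeholder subnet and security group IDs.
    print(build_service_request(
        "my-ml-cluster", "my-ml-service", "my-ml-task",
        ["subnet-aaaa1111", "subnet-bbbb2222"], ["sg-cccc3333"],
    ))
```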

ECS Deployment Models

EC2 vs Fargate:

Choose between managing your own cluster of EC2 instances (EC2 launch type) or using serverless infrastructure (Fargate launch type).

Networking and Load Balancing

Setting Up a Virtual Private Cloud (VPC)

Create a VPC:

Purpose: A VPC provides a private, isolated section of the AWS Cloud where you can launch AWS resources.

AWS Management Console:

Navigate to the VPC Dashboard.

Click “Create VPC”.

Enter a "Name tag" for your VPC, like MyVPC.

Set the "IPv4 CIDR block", e.g., 10.0.0.0/16.

Leave other settings as default and click "Create".

VPC Considerations:

The CIDR block defines the IP address range of your VPC. It’s important to plan this according to the scale of your deployment.

The VPC will be the network backbone for your ECS tasks and other resources.

Subnets and Security Groups

Create Subnets:

Purpose: Subnets allow you to partition your network within your VPC.

In the VPC Dashboard, click "Subnets" and then "Create subnet".

Select your VPC, assign a name, choose an Availability Zone, and set a CIDR block, e.g., 10.0.1.0/24.

Repeat the process to create additional subnets. An ALB needs subnets in at least two Availability Zones.
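To sanity-check CIDR choices before clicking through the console, Python's ipaddress module is handy. This is a quick sketch using the example ranges from the steps above. The one AWS-specific detail is that five addresses in every subnet are reserved (the first four and the last).

```python
import ipaddress

# AWS reserves the first four addresses and the last address in every subnet.
AWS_RESERVED_PER_SUBNET = 5


def usable_addresses(cidr: str) -> int:
    """Number of addresses you can actually assign inside an AWS subnet."""
    return ipaddress.ip_network(cidr).num_addresses - AWS_RESERVED_PER_SUBNET


def fits_inside(subnet_cidr: str, vpc_cidr: str) -> bool:
    """Check that a subnet range falls entirely within the VPC range."""
    return ipaddress.ip_network(subnet_cidr).subnet_of(ipaddress.ip_network(vpc_cidr))


if __name__ == "__main__":
    vpc_cidr, subnet_cidr = "10.0.0.0/16", "10.0.1.0/24"
    print(fits_inside(subnet_cidr, vpc_cidr))
    print(usable_addresses(subnet_cidr))
    print(len(list(ipaddress.ip_network(vpc_cidr).subnets(new_prefix=24))))
```

With these ranges the subnet fits inside the VPC, a /24 leaves 251 assignable addresses, and the /16 has room for 256 subnets of that size.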

Create Security Groups:

Purpose: Security groups act as virtual firewalls for your instances to control inbound and outbound traffic.

In the EC2 Dashboard, click "Security Groups" and then "Create security group".

Name your group, provide a description, select your VPC, and configure the inbound and outbound rules as per your requirements.
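One common pattern, sketched below, is two security groups: one for the load balancer that accepts traffic from the internet, and one for the tasks that only accepts traffic from the load balancer's group. The rule dicts follow the EC2 IpPermissions layout as I understand it; the group ID is a placeholder and the helper names are hypothetical.

```python
def alb_ingress_from_internet(port: int = 80) -> list:
    """Inbound rule for the load balancer: allow anyone on the listener port."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            "IpRanges": [{"CidrIp": "0.0.0.0/0"}],
        }
    ]


def task_ingress_from_alb(alb_security_group_id: str, container_port: int = 80) -> list:
    """Inbound rule for the tasks: allow only the load balancer's security group."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": container_port,
            "ToPort": container_port,
            "UserIdGroupPairs": [{"GroupId": alb_security_group_id}],
        }
    ]


if __name__ == "__main__":
    # Placeholder security group ID.
    print(alb_ingress_from_internet())
    print(task_ingress_from_alb("sg-0123456789abcdef0"))
```

Referencing a group ID instead of an IP range means the task rule keeps working even when the load balancer's addresses change.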

Application Load Balancer (ALB)

Create an ALB:

Purpose: ALBs distribute incoming application traffic across multiple targets, like ECS tasks, in different Availability Zones.

Navigate to the EC2 Dashboard and click on "Load Balancers" then "Create Load Balancer".

Select "Application Load Balancer", set a name, and choose "internet-facing".

Select the VPC and the subnets you created earlier.

Configure security settings and create a new security group or select an existing one.

Define a Target Group:

In the Load Balancer setup, you will be prompted to configure a target group.

Click "Create target group".

Name the target group, choose "IP" as the target type, and select your VPC.

Specify a protocol and port your targets receive traffic on, typically HTTP/HTTPS and port 80/443.

Click "Create".

Associate Target Group with ECS Service:

Once you create your ECS service, you can associate it with this target group. This allows the load balancer to route traffic to your ECS tasks.

CI/CD with AWS CodePipeline

Step 1: Define Source Repository

Choose Source Provider:

In the AWS Management Console, navigate to the CodePipeline service.

Click "Create pipeline".

Set a pipeline name, like MLModelPipeline.

For the source stage, select your source provider (e.g., AWS CodeCommit, GitHub, S3).

Connect to the repository where your ML model code is stored.

Source Repository Configuration:

Select the specific repository and branch containing your ML application (e.g., GitHub repo, main branch).

This stage triggers the pipeline whenever changes are pushed to the specified branch.

Step 2: Set Up Build Stage with AWS CodeBuild

Create a Build Project:

In the build stage, select "AWS CodeBuild" and choose "Create project".

Provide a name for the build project, like MLModelBuild.

Choose an operating system, runtime, and build environment for your build project.

Buildspec Configuration:

Define build commands and settings in a buildspec.yml file in your repository or enter build commands directly.

The build stage typically includes commands for installing dependencies, running tests, and building Docker images.

Integrate with ECR:

If you're using Docker, configure CodeBuild to push the built Docker image to ECR.

Ensure the build project's service role has the necessary permissions to access ECR and ECS.

Step 3: Deployment Stage Configuration

Deploy to ECS:

For the deployment stage, select "Amazon ECS".

Choose the ECS service and cluster you have previously set up.

This stage will deploy the built Docker image as a new task in your ECS service.

Deployment Configuration:

Specify details such as the container name and container port if required.

Define any additional deployment actions, like running database migrations or post-deployment tests.

Click "Create pipeline" to finalize and launch your CI/CD pipeline.
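One detail that trips people up: the standard ECS deploy action in CodePipeline expects the build stage to output a file named imagedefinitions.json that maps each container name to the image it should run. Here is a small sketch that generates that content. The container name ml-container matches the task definition from earlier, and the image URI is a placeholder.

```python
import json


def image_definitions(container_name: str, image_uri: str) -> str:
    """Return the JSON content for imagedefinitions.json (one container)."""
    return json.dumps([{"name": container_name, "imageUri": image_uri}])


if __name__ == "__main__":
    # Placeholder image URI; the name must match the container in the task definition.
    content = image_definitions(
        "ml-container",
        "123456789012.dkr.ecr.us-east-1.amazonaws.com/my-ml-model:latest",
    )
    print(content)
```

In practice you would write this file in the post_build phase of your build and list it as a build artifact so the deploy stage can pick it up.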

Example AWS CodePipeline Setup

codepipeline.yml:

    Resources:
      MyPipeline:
        Type: AWS::CodePipeline::Pipeline
        Properties:
          # Pipeline configuration goes here

4 - How to Sell this project (to a hiring manager)

If the end goal is to get a job or sell that you have experience with MLOps, you need to be able to sell this project. Looking at this project, you essentially made an open source model ‘come alive’. You did this by exposing it as an API and making it available for consumption.

You use ECS or EKS with Fargate to take advantage of serverless compute. This means that the cloud service provider is taking care of the backend infrastructure management. You can now focus on creating value with the machine learning model.

We are using containers to align with cloud native design. Using containers creates a portable and isolated environment for your application. It facilitates a microservices based architecture. This is the current trend in software architecture.

We chose to use an ALB to distribute load between the containers. In a modern microservices-based architecture, horizontal scaling is the preferred choice. An ALB spreads incoming requests across your containers so that no single container is overloaded.

Note: If you have a hardo interviewer, the ALB's default routing algorithm is round robin. NLBs use a flow hash.
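If you want to be able to explain the difference on a whiteboard, here is a toy sketch of both ideas. It is a simplified illustration and not how AWS implements either load balancer. The target names and the flow tuple are made up.

```python
import hashlib
from itertools import cycle


def round_robin(targets: list, request_count: int) -> list:
    """Hand each new request to the next target in a fixed rotation."""
    rotation = cycle(targets)
    return [next(rotation) for _ in range(request_count)]


def flow_hash_target(targets: list, flow: tuple) -> str:
    """Pick a target from a hash of the connection details, so one flow sticks to one target."""
    digest = hashlib.sha256(repr(flow).encode("utf-8")).digest()
    return targets[int.from_bytes(digest[:8], "big") % len(targets)]


if __name__ == "__main__":
    targets = ["task-a", "task-b", "task-c"]
    print(round_robin(targets, 7))
    # Hypothetical flow: (protocol, source IP, source port, destination IP, destination port)
    flow = ("tcp", "203.0.113.10", 50123, "10.0.1.25", 80)
    print(flow_hash_target(targets, flow))
```

Round robin looks at nothing but the order of arrival, while a flow hash sends every packet of the same connection to the same target.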

We use CI/CD tools like CodeBuild and CodePipeline to remove manual errors and processes. In industry, common tools like Jenkins, GitHub Actions, and GitLab all fit into this space. CI/CD creates repeatable, automated processes that deliver software in a controlled, error-free way.

CodeBuild does your build and compilation processes and CodePipeline orchestrates your CI/CD.

The biggest question you would get is how would you productionalize this?

You would say something like:

Ensure deployment fits any networking constructs the company has in place. Meaning I would pick the right AWS account with customer facing connectivity and any other constructs the company has.

Choose a serverless option like EKS or ECS in Fargate mode to have AWS manage the infrastructure. Now I can focus on creating value with our ML models.

Properly scope the subnets and security groups. Ensure only the connectivity that is needed is granted. Only take the number of IPs that are needed, plus some buffer space.

Make sure to scope the IAM roles following least privilege. Only give the roles the permissions they need.

I would add CloudWatch alarms to ensure the availability and high performance of the application. Typically, a Slack bot integration and another integration to an incident management service is good.

I would also ensure we have proper autoscaling rules defined for EKS or ECS. This is to horizontally scale out the service and meet demand. This also ensures we are not overprovisioned during off hours.

Finally, I would create basic unit, build, and regression tests. This is to align with our CI/CD suite. This ensures thoroughly tested software gets delivered mostly bug free.

If you say something like that – I would smash the hire button. This shows you understand the bigger picture and have actually done this before. That, or you at least thought about how to productionalize your models.

I hope you all make something of this project and add something that pops to your resume.

Check out the rest of my writings at BowTiedCelt.substack.com. Be sure to check out the other posts I’ve written for Raptor, including a series on Airflow:

https://bowtiedraptor.substack.com/p/mlops-part-3-apache-airflow

![AWS Fargate](https://substackcdn.com/image/fetch/$s_!u8Re!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F023dc834-a809-496b-8ace-e0d794edddd7_379x191.png)