Python Data Skills 10: Data Cleansing Essentials

Cleaning String Series Data, Cleaning Dates, Cleaning Missing Data, Cleaning Data with KNN

Let’s get to it.

Table of Contents

Evaluating & Cleaning String Series Data

Working With Dates

Identifying & Cleansing Missing Data

Missing Value Imputation with KNN

1 - Evaluating & Cleaning String Series Data

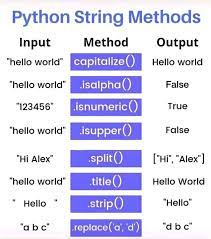

When working with text data, it is often necessary to check and clean the data to make it suitable for analysis. Pandas provides several powerful methods for string manipulation. This enables easy and efficient cleaning of text data.

1.1 Using Contains

Use the contains method to check whether a certain substring is present within each element of the Series. It returns a Boolean mask that you can use to filter the data or in further operations.

import pandas as pd

s = pd.Series(['apple', 'banana', 'cherry'])

mask = s.str.contains('apple')

Here, mask is a Boolean Series that indicates whether each element in s contains the substring 'apple'. This method is very useful for filtering data based on specific text patterns.
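To make the filtering step concrete, here is a minimal sketch of applying the mask with Boolean indexing. The sample Series and the case=False and na=False options are illustrative choices, not part of the original example.

```python
import pandas as pd

# Illustrative data, including a missing entry and mixed capitalization.
fruits = pd.Series(['apple', 'banana', 'Pineapple', None])

# case=False makes the match case-insensitive;
# na=False treats missing entries as non-matches so the mask stays Boolean.
mask = fruits.str.contains('apple', case=False, na=False)

# Boolean indexing keeps only the rows where the mask is True.
matches = fruits[mask]
print(matches.tolist())  # ['apple', 'Pineapple']
```

Note that contains interprets the pattern as a regular expression by default, so pass regex=False if the substring includes characters such as '.' or '('.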

1.2 Using endswith

The endswith method checks if each string in the Series ends with a specified substring. Like contains, it returns a Boolean mask.

mask = s.str.endswith('na')

In this example, mask shows whether each element in s ends with 'na'. This can be particularly helpful for categorizing or filtering data based on suffix patterns.

1.3 Using findall

The findall method returns a Series of lists containing all occurrences of a pattern. It uses regular expressions, offering powerful pattern matching capabilities.

result = s.str.findall(r'\ba\w*\b')

Here, result will contain all words in the strings of s that begin with the letter 'a'. The use of regular expressions with findall allows for sophisticated text search. In this Series, only 'apple' matches, so the other two elements return empty lists.
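As a quick illustration of what findall returns, the sketch below runs the same pattern on a Series that includes a multi-word string. The sample data is made up for the example.

```python
import pandas as pd

s = pd.Series(['apple', 'banana', 'an avocado and a cherry'])

# \b marks a word boundary, so only whole words starting with 'a' match.
result = s.str.findall(r'\ba\w*\b')

print(result.tolist())
# [['apple'], [], ['an', 'avocado', 'and', 'a']]
```

The 'a' characters inside 'banana' are not at a word boundary, which is why that element yields an empty list.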

1.4 Creating a Function for Processing String Values

For more complex string processing, you may need to define a custom function. You can apply this function to each element in the Series using the apply method. For simpler processing, the replace method is often enough.

def process_string(value):
    value = value.replace('apple', 'orange')
    # Additional processing
    return value

s_new = s.apply(process_string)

In the above code, we created a function process_string. This function replaces 'apple' with 'orange' and leaves room for other processing steps. By using apply, we create a new Series s_new with the processed strings.

Combining the use of a custom function with replace offers great flexibility. This allows you to perform even the most complex string manipulations with ease.
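The sketch below shows one way the two approaches might compare: a custom function passed to apply, and the same steps chained through the vectorized .str accessor. The cleaning steps (trim, lowercase, replace) and the sample data are assumptions chosen for illustration.

```python
import pandas as pd

s = pd.Series(['  Apple pie', 'banana ', 'apple tart'])

def process_string(value):
    # Hypothetical cleaning steps: trim whitespace, lowercase, then swap a word.
    value = value.strip().lower()
    return value.replace('apple', 'orange')

# Option 1: run the custom function on every element.
via_apply = s.apply(process_string)

# Option 2: the same steps using pandas' vectorized string methods.
via_str = s.str.strip().str.lower().str.replace('apple', 'orange', regex=False)

print(via_apply.tolist())  # ['orange pie', 'banana', 'orange tart']
```

When every step has a .str equivalent, the chained version is usually the simpler choice. A custom function earns its place when the logic needs branching or several intermediate values.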

2 - Working With Dates

Working with dates is a common task in data analysis, especially in time-series analysis. Pandas provides a wide array of tools that make it easier to manipulate and analyze date information. Here's how you can perform essential tasks related to dates in Pandas.

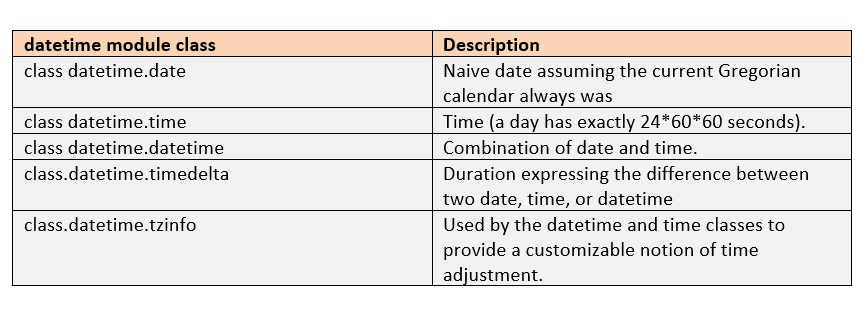

2.1 Datetime Module

The datetime module in Python provides functions and classes for manipulating dates and times. It is often used in conjunction with Pandas to handle date-related tasks.

import pandas as pd

from datetime import datetime

# Creating a datetime object

dt = datetime(2023, 7, 22)

# Creating a pandas Series with datetime objects

s = pd.Series([dt, datetime(2023, 5, 15)])

You can also use functions like pd.to_datetime. This function converts strings into datetime objects, allowing you to work with them as dates.
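Here is a minimal sketch of pd.to_datetime converting date strings, followed by the .dt accessor to pull out date parts. The sample strings and the explicit format argument are illustrative assumptions.

```python
import pandas as pd

raw = pd.Series(['2023-07-22', '2023-05-15'])

# An explicit format avoids ambiguity about day/month order.
dates = pd.to_datetime(raw, format='%Y-%m-%d')

# Once converted, the .dt accessor exposes date components.
print(dates.dt.year.tolist())          # [2023, 2023]
print(dates.dt.month_name().tolist())  # ['July', 'May']
```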

2.2 Confirm that the data has valid date values

When working with dates in a dataset, you must ensure that the dates are valid. You can use the pd.to_datetime function with the errors parameter. This handles errors in date conversion.

# Assuming 'date_column' is a column with date strings

dates = pd.to_datetime(df['date_column'], errors='coerce')

Using errors='coerce' will replace any invalid dates with NaT (Not a Time). This will allow you to identify and handle them.
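To show how the NaT values can be used to locate bad input, here is a small sketch. The DataFrame contents are invented for the example, and date_column follows the name used above.

```python
import pandas as pd

df = pd.DataFrame({
    'date_column': ['2023-07-22', '2023-02-30', 'not a date', '2023-05-15'],
})

# Invalid entries (an impossible date and free text) become NaT.
dates = pd.to_datetime(df['date_column'], format='%Y-%m-%d', errors='coerce')

# Select the original rows whose conversion failed.
invalid_rows = df[dates.isna()]
print(invalid_rows['date_column'].tolist())  # ['2023-02-30', 'not a date']
```

If the column already contains missing values, those rows will also show up as NaT, so compare against the original column when you need to separate the two cases.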

2.3 Using fillna to replace missing data

You can replace missing or incorrect dates using the fillna method. This method is particularly useful for filling in gaps in a time series.

# Replacing NaT with a specific date

dates_filled = dates.fillna(datetime(2000, 1, 1))

# Using forward fill to replace NaT with the previous valid date

dates_filled_ffill = dates.ffill()

In the above example, the first code snippet replaces all NaT values with a specific date. The second one uses the previous valid date to fill the missing values (the method='ffill' argument to fillna is deprecated in recent pandas versions, so ffill is called directly). You can choose the method that best suits the nature of your data and analysis requirements.

3 - Identifying & Cleansing Missing Data

Handling missing data is a complex issue in data preprocessing and analysis. Identifying and cleansing missing data is crucial. Missing or incomplete data can introduce biases and reduce statistical power. It can also skew distributions, and lead to misleading or invalid conclusions.

3.1 Number of Missing Values for Each Column

Before addressing missing data, you need to understand where and how much data is missing. Pandas' isnull method, followed by sum, provides a count of missing values for each column.

# Check for missing values in each column

missing_values = df.isnull().sum()

print(missing_values)

This step helps us understand the distribution of missing values across different columns. Use this information to determine the best method to fill or drop these values, based on the type and importance of each feature.
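A count is easier to act on next to a percentage. The sketch below builds a small summary table with both. The DataFrame and the column names missing and pct_missing are made up for illustration.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    'age': [25, np.nan, 31, np.nan],
    'city': ['Oslo', 'Lima', None, 'Pune'],
    'score': [0.5, 0.7, 0.9, 0.4],
})

# isnull().sum() counts missing cells; isnull().mean() gives the fraction.
summary = pd.DataFrame({
    'missing': df.isnull().sum(),
    'pct_missing': df.isnull().mean() * 100,
}).sort_values('missing', ascending=False)

print(summary)
```

Sorting by the count puts the most affected columns at the top, which is usually where the fill-or-drop decision matters most.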

3.2 Remove rows where nearly all data is missing

In certain instances, specific rows may contain a majority of missing values. These rows might be more noise than signal, and removing them may improve the quality of your dataset.

import math

# Maximum fraction of missing values allowed in a row
threshold = 0.8

# Drop rows where more than 80% of values are missing.
# dropna's thresh is the minimum number of non-missing values a row needs to be kept.
df_dropped = df.dropna(thresh=math.ceil(df.shape[1] * (1 - threshold)))

Adjust the threshold based on the data and your domain knowledge to make this cleaning step more effective. Removing rows that are mostly empty helps ensure that the remaining data is cohesive and reliable for analysis.
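An alternative that avoids translating a fraction into dropna's thresh count is to compute the missing fraction per row and filter on it directly. This is a sketch on invented data, not the only way to do it. The name max_missing_fraction is hypothetical.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    'a': [1.0, np.nan, np.nan],
    'b': [2.0, np.nan, np.nan],
    'c': [3.0, np.nan, np.nan],
    'd': [4.0, 7.0, np.nan],
    'e': [5.0, 9.0, np.nan],
})

max_missing_fraction = 0.8

# Fraction of missing cells in each row (axis=1 averages across columns).
missing_fraction = df.isnull().mean(axis=1)

# Keep rows at or below the allowed fraction; the fully empty row is dropped.
df_dropped = df[missing_fraction <= max_missing_fraction]
print(df_dropped.index.tolist())  # [0, 1]
```

Row 1 is 60% missing and survives, while row 2 is 100% missing and is removed.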

3.3 Using mean to replace missing values

When dealing with missing numerical values, replacing them is an option. A replacement with the mean of the existing values is a common approach. This method preserves the mean of each column, although it reduces the variance because every filled value sits exactly at the center.

# Replace missing values with the mean of the respective column

df_filled_mean = df.fillna(df.mean(numeric_only=True))

If missing values are not randomly distributed, you should not replace them with the mean, because doing so might introduce bias. Consider the context and nature of the missing data before using this approach.
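The effect of mean imputation on a column's statistics can be checked directly. The sketch below uses a tiny made-up Series to compare the mean and standard deviation before and after filling.

```python
import numpy as np
import pandas as pd

values = pd.Series([10.0, 20.0, np.nan, 30.0, np.nan])

# Fill the gaps with the mean of the observed values (20.0 here).
filled = values.fillna(values.mean())

# The mean is unchanged, but the spread shrinks.
print(values.mean(), filled.mean())
print(values.std(), filled.std())
```

The standard deviation drops because the filled values add no spread of their own, which is worth keeping in mind before running variance-sensitive analyses on imputed data.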

3.4 Use forward fill to replace missing values

Forward fill fills each missing value with the preceding non-missing value. This can be particularly useful for ordered data such as time-series.

# Use the previous value to fill missing values (especially useful for time-series data)

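df_filled_ffill = df.ffill()

Make sure the data is sorted correctly before using forward fill, so that each gap is filled from the observation that truly precedes it. The method='ffill' argument to fillna is deprecated in recent pandas versions, which is why ffill is called directly here.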
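Here is a minimal sketch of why sorting matters, using an invented price series whose rows arrive out of order. The column names date and price are assumptions for the example.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    'date': pd.to_datetime(['2023-01-03', '2023-01-01', '2023-01-02', '2023-01-04']),
    'price': [np.nan, 100.0, np.nan, 103.0],
})

# Sort chronologically first, then carry the last known value forward.
df_sorted = df.sort_values('date').reset_index(drop=True)
df_sorted['price'] = df_sorted['price'].ffill()

print(df_sorted['price'].tolist())  # [100.0, 100.0, 100.0, 103.0]
```

Without the sort, the first row would have no earlier value to copy from and would stay missing.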

3.5 Fill missing values with the mean by group

When a dataset contains distinct categories or groups, it may be more meaningful to replace missing values with the mean value of the relevant subgroup.

# Group by a specific category and fill missing numeric values with the mean of each group
numeric_cols = df.select_dtypes(include='number').columns
df_filled_group_mean = df.copy()
df_filled_group_mean[numeric_cols] = df.groupby('category')[numeric_cols].transform(lambda x: x.fillna(x.mean()))

This method respects the inherent grouping within the data and maintains the relationships between different subgroups. It provides a more nuanced treatment of missing values than a single overall mean.
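For a single column, the group-wise fill can be sketched with transform('mean'), which returns each row's group mean aligned to the original index. The data and the column names value and value_filled are invented for illustration.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    'category': ['a', 'a', 'a', 'b', 'b'],
    'value': [1.0, np.nan, 3.0, 10.0, np.nan],
})

# One group mean per row, aligned with df's index.
group_means = df.groupby('category')['value'].transform('mean')

# fillna with a Series fills each gap from the matching index position.
df['value_filled'] = df['value'].fillna(group_means)

print(df['value_filled'].tolist())  # [1.0, 2.0, 3.0, 10.0, 10.0]
```

The gap in group 'a' receives 2.0 and the gap in group 'b' receives 10.0, rather than both getting one overall mean.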

4 - Missing Value Imputation with KNN

From this post, you should already know how to spot your outliers with KNN.

4.1 KNN Algorithm

The k-Nearest Neighbor algorithm is primarily known as a classification and regression technique. It operates by finding the "k" closest data points (neighbors) to a given observation. It then makes predictions based on the values of these neighbors.

Distance Measure: KNN calculates the distance between data points using a distance metric like Euclidean distance.

Choosing Neighbors: The algorithm identifies the "k" nearest data points to the observation with missing values.

Making Predictions: For classification, the majority class among the neighbors is chosen. For regression or imputation, the mean or weighted mean of the neighbors is calculated.
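The three steps above can be sketched by hand with NumPy for a single missing value. This is a simplified illustration on made-up numbers using an unweighted mean, not how any particular library implements it.

```python
import numpy as np

# Complete observations: two features, then the column we want to impute.
known = np.array([
    [1.0, 1.0, 10.0],
    [1.0, 2.0, 12.0],
    [2.0, 1.0, 14.0],
    [8.0, 9.0, 50.0],
])
query = np.array([1.0, 1.5])  # features of the row whose third value is missing
k = 3

# 1. Distance measure: Euclidean distance on the observed features.
distances = np.linalg.norm(known[:, :2] - query, axis=1)

# 2. Choosing neighbors: indices of the k smallest distances.
nearest = np.argsort(distances)[:k]

# 3. Making predictions: the mean of the neighbors' values fills the gap.
imputed = known[nearest, 2].mean()
print(imputed)  # 12.0
```

The distant fourth row is ignored, so its value of 50 does not pull the estimate upward the way an overall mean would.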

4.2 Why You Should Use KNN to Clean Your Data

Non-Parametric Method: KNN doesn't make any assumptions about the distribution of the underlying data, making it flexible.

Utilizes Local Information: By considering only the "k" nearest data points, KNN leverages local information which can be very effective when missing values are not randomly distributed.

Suitable for Different Data Types: KNN can be used for both categorical and numerical variables, making it versatile.

One drawback is that KNN can be computationally expensive, especially with large datasets, because it requires a distance calculation against every other point.

4.3 How You Can Use KNN to Clean Your Data

Using KNN for missing value imputation means treating the variable with missing values as the target variable and using the other variables as features to predict and fill the gaps. Here's a step-by-step guide to using KNN for imputing missing values.

Preprocess the Data: Remove rows with missing target variables and handle other missing values as required.

Scale the Features: Since KNN relies on distance measures, it's crucial to scale the features so that no variable dominates due to its scale.

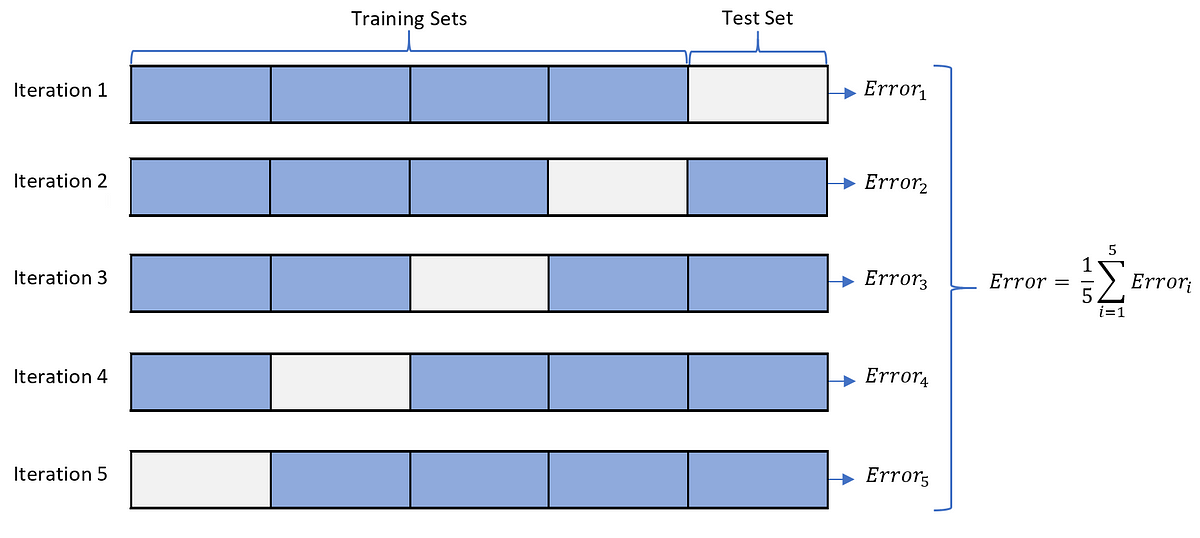

Choose the Right 'k': The choice of "k" depends on the data. A smaller "k" might be sensitive to noise, while a larger "k" might smooth out relevant local structures.

Apply KNN Imputer: Using libraries like scikit-learn, you can apply KNN imputation.

from sklearn.impute import KNNImputer

# Create the imputer

knn_imputer = KNNImputer(n_neighbors=5)

# Apply the imputer

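df_imputed = pd.DataFrame(knn_imputer.fit_transform(df), columns=df.columns, index=df.index)

Note: KNNImputer expects numeric columns, and fit_transform returns a NumPy array, which is why the result is wrapped back into a DataFrame here. Run a quick cross-validation test to check that you are using a suitable number of neighbors.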
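One rough way to combine the scaling step with a check on "k" is to hide some known values, impute them, and compare against the truth. The sketch below does this on synthetic data. The column names, the helper masked_imputation_error, and the candidate values of k are all assumptions for illustration, and this hold-out check is a simplified stand-in for a full cross-validation.

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic, illustrative data: correlated numeric columns on very different scales.
x = rng.normal(size=200)
df = pd.DataFrame({
    'height_cm': 170 + 10 * x,
    'weight_kg': 70 + 12 * x + rng.normal(scale=3, size=200),
    'income': 50_000 + 8_000 * x + rng.normal(scale=500, size=200),
})

# Hide 20 known income values so the imputation can be scored against the truth.
hidden = rng.choice(df.index, size=20, replace=False)
df_missing = df.copy()
df_missing.loc[hidden, 'income'] = np.nan

def masked_imputation_error(k):
    """Hypothetical helper: mean absolute error on the hidden cells for a given k."""
    scaler = StandardScaler()
    # StandardScaler ignores NaNs when fitting and keeps them when transforming.
    scaled = scaler.fit_transform(df_missing)
    imputed_scaled = KNNImputer(n_neighbors=k).fit_transform(scaled)
    # Undo the scaling so the error is in the original units.
    imputed = pd.DataFrame(scaler.inverse_transform(imputed_scaled),
                           columns=df.columns, index=df.index)
    return (imputed.loc[hidden, 'income'] - df.loc[hidden, 'income']).abs().mean()

errors = {k: masked_imputation_error(k) for k in (1, 3, 5, 10)}
best_k = min(errors, key=errors.get)
print(errors, best_k)
```

The best value of "k" depends on the data, so treat the result as a guide for this dataset rather than a general rule.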
