Python Data Skills 5 - Inspecting Your Data

Structure of your dataframe, 5 number summary, missing value distribution, duplicates, initial insights

If you’ve read the past 4 Python data skills posts, then you have a solid idea of how to load almost any data into a pandas dataframe. You also know the list of things that can go wrong, and how to handle those problems.

Now that we have a dataframe in front of us, the first thing we’ll do with it is answer the most asked question:

“So, how does it look?”

Note that in this section we are not doing any analysis. We’re opening up the dataframe, doing a quick inspection of it, and putting it in a useful state.

Table of Contents

Structure of The DataFrame

Five Number Summary

Missing Value Distribution

Duplicates

Initial Insights

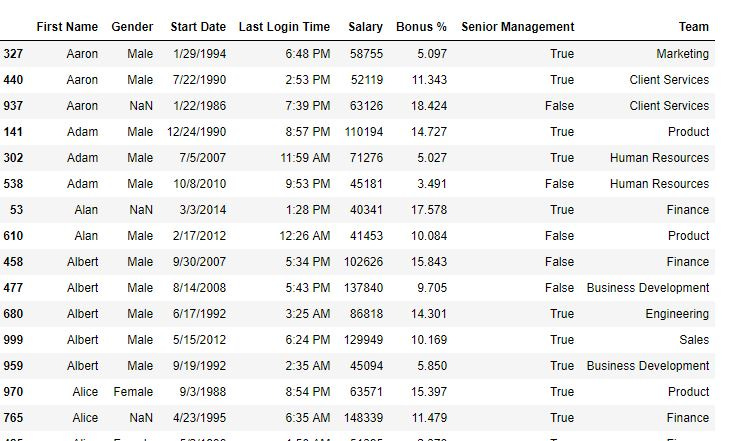

1 - Structure of the dataframe

Understanding the structure of your DataFrame is crucial. You need to know three things:

Cardinality of your dataset: number of rows & columns

Names of your features (columns)

Data type of each feature

Here’s a code snippet, assuming df is your dataframe.

# Let's assume df is your DataFrame

print("Cardinality:", df.shape)

print("Columns:", df.columns)

print("Data Types:", df.dtypes)

A common pitfall is pandas' implicit type coercion. When a column contains mixed data types, pandas may fall back to a generic type (e.g., object) for the whole column. This can result in precision loss or failed mathematical operations. The onus is on you to ensure each column has a consistent data type. Here are 2 of the most common problems you’ll encounter:

Numerical values stored as strings

Date stored as strings
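Here is a minimal sketch of how you might fix both problems. The column names and values are made up for illustration, and it assumes the dates follow a single known format.

```python
import pandas as pd

# Hypothetical example data: both columns arrive as text.
df = pd.DataFrame({
    "price": ["10.5", "20", "n/a"],
    "order_date": ["2023-01-05", "2023-02-10", "2023-03-15"],
})

# errors="coerce" turns values that cannot be parsed into NaN instead of raising.
df["price"] = pd.to_numeric(df["price"], errors="coerce")

# Passing an explicit format avoids ambiguous date parsing.
df["order_date"] = pd.to_datetime(df["order_date"], format="%Y-%m-%d")

print(df.dtypes)
```

Keep in mind that errors="coerce" silently replaces unparseable values with NaN, so it is worth checking how many missing values the conversion introduced.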

2 - Five Number Summary

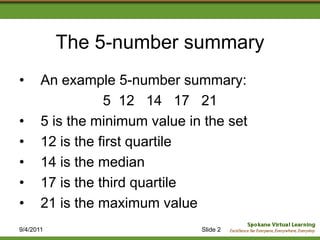

The five-number summary provides a concise statistical snapshot of your data. It consists of the smallest value, the first quartile (Q1 or 25th percentile), the median (Q2 or 50th percentile), the third quartile (Q3 or 75th percentile), and the largest value of your dataset. You get these using the .describe() method in pandas, which also reports the count, mean, and standard deviation of each numerical column.

You can read more about the 5 number summary here.

print(df.describe())

2.1 Understanding the summary

From these five numbers, you can understand a lot about your data:

Smallest and Largest: Together they give the range of your data. Unusually high or low values might indicate outliers or errors in your data.

Quartiles: The quartiles break your data into four equal parts. Q1 is the "middle" value of the first half, Q2 is the median of the whole dataset, and Q3 is the "middle" value of the second half of your data.

Interquartile Range (IQR): The range between Q1 and Q3. It describes the spread of the middle 50% of your data, so it is largely unaffected by potential outliers.
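If you want the five numbers and the IQR on their own, here is a small sketch using .quantile(). The series is a made-up example, and pandas uses linear interpolation between data points by default.

```python
import pandas as pd

# Hypothetical example column.
values = pd.Series([1, 2, 3, 4, 5, 6, 7, 8, 9])

# Smallest, Q1, median, Q3, and largest in one call.
five_numbers = values.quantile([0, 0.25, 0.5, 0.75, 1.0])

# The IQR is the distance between the third and first quartiles.
q1, q3 = five_numbers[0.25], five_numbers[0.75]
iqr = q3 - q1

print(five_numbers)
print("IQR:", iqr)
```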

2.2 Limitations

The five-number summary provides valuable insights, but remember that the mean and standard deviation that .describe() reports alongside it are most meaningful when the distribution is roughly symmetric (or normal). In the presence of skewed data or outliers, these statistics may offer a distorted picture of your data.

For instance, consider a variable representing income. Income distributions are often right-skewed. This means many people earn small-to-median incomes, but a few earn very high incomes. In such a case, the extreme values inflate the mean and standard deviation, so they no longer reflect the typical income. The median and IQR are less affected by outliers and give a more accurate picture of the central tendency and spread.
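To make this concrete, here is a quick sketch with invented income figures, where a single extreme value is enough to pull the mean and standard deviation far away from the typical income.

```python
import pandas as pd

# Hypothetical incomes: most are modest, one is extreme (right-skewed).
income = pd.Series([30_000, 32_000, 35_000, 38_000, 40_000, 1_000_000])

# Mean and standard deviation are pulled up by the single extreme value.
print("mean:  ", income.mean())
print("std:   ", income.std())

# Median and IQR stay close to what most people in the sample earn.
iqr = income.quantile(0.75) - income.quantile(0.25)
print("median:", income.median())
print("IQR:   ", iqr)
```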

3 - Missing value distribution

3.1 Missing Values

Use the .isnull().sum() method in pandas to count the number of missing values in each column of a DataFrame. It provides an overview of how much data is missing.

This is essential in deciding how to handle these missing values.

print(df.isnull().sum())

Missing data can introduce a range of issues in your analysis:

Computational issues: Some algorithms cannot work with missing data.

Bias and Variability: Mishandling missing values can lead to biased or incorrect results. It can also reduce the variability in your data.
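Raw counts are harder to judge on large datasets, so it can help to look at the share of missing values per column as well. A minimal sketch, using a made-up dataframe:

```python
import numpy as np
import pandas as pd

# Hypothetical example data with gaps in two columns.
df = pd.DataFrame({
    "age": [25, np.nan, 31, np.nan],
    "city": ["Paris", "Lima", None, "Oslo"],
    "score": [1.0, 2.0, 3.0, 4.0],
})

# The mean of a boolean mask is the fraction of True values,
# so isnull().mean() gives the share of missing values per column.
missing = pd.DataFrame({
    "n_missing": df.isnull().sum(),
    "pct_missing": df.isnull().mean() * 100,
}).sort_values("pct_missing", ascending=False)

print(missing)
```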

3.2 Different Types of Missing Values

Understanding the reason behind the missingness is vital before deciding on an imputation strategy. There are generally three types of missing data:

Missing Completely At Random (MCAR): The fact that the data is missing is not related to any other values, observed or missing.

Missing at Random (MAR): The missingness is not related to the missing values themselves, but there is a systematic relationship between the missingness and other observed data.

Missing Not At Random (MNAR): The missingness relates to the missing values themselves.
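One rough way to probe this is to compare how often a column is missing across the groups of another, observed column. The sketch below uses invented survey data. A clear difference between groups suggests the data is not MCAR, but it cannot tell MAR and MNAR apart on its own.

```python
import numpy as np
import pandas as pd

# Hypothetical survey data: income is missing more often for one group.
df = pd.DataFrame({
    "age_group": ["young", "young", "young", "old", "old", "old"],
    "income": [np.nan, np.nan, 42_000, 55_000, 61_000, np.nan],
})

# Share of missing income values within each age group.
missing_rate_by_group = df["income"].isnull().groupby(df["age_group"]).mean()

print(missing_rate_by_group)
```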

We will cover the methods you can use to handle missing data (in Python) in a different post.

4 - Duplicates

4.1 Understanding Duplicates

Duplicates in a dataset are rows that repeat exactly or partly. This redundancy can occur for various reasons: errors during data collection, data merging, or the inherent characteristics of the data. Detecting and understanding duplicates is an essential step in data preprocessing.

print(df.duplicated().sum())

Duplicates can significantly skew the analysis and a machine learning model's performance. They can:

Artificially Inflate Data: Duplicates increase the size of the dataset, which can distort the statistics and influence any derived insights.

Bias Models: If duplicates are more common in a specific class in a classification problem, they can lead to a model that's biased towards that class.

Mislead Understanding: Repeated rows can create non-existent patterns in the data, which can be misleading during exploratory analysis.

4.2 Handling Duplicates

You can get rid of these repeated rows with the .drop_duplicates() method.

df = df.drop_duplicates()

Do not discard your duplicates (especially in this section) without looking at them first:

Dropping duplicates can sometimes remove valuable information. It's crucial first to understand why duplicates are present. If the duplicates are due to a data collection error or a data merging error, it's safe to remove them. But, in some cases, duplicates represent valid repeated observations. For example, in a sales dataset, a duplicate row might represent more than one sale of the same product.

One way to check this is to see if the dataset contains a unique identifier for each row, such as a transaction ID in a sales dataset. If it does, you can remove duplicates with the same identifier.
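As a sketch of that check, the example below assumes a sales dataset with a transaction_id column. Both the column names and the data are hypothetical.

```python
import pandas as pd

# Hypothetical sales data; "transaction_id" is an assumed unique identifier.
df = pd.DataFrame({
    "transaction_id": [1, 2, 2, 3, 4],
    "product": ["pen", "book", "book", "pen", "pen"],
    "amount": [2.0, 12.0, 12.0, 2.0, 2.0],
})

# keep=False marks every copy of a repeated ID, so you can inspect all of them.
repeated_ids = df[df.duplicated(subset=["transaction_id"], keep=False)]
print(repeated_ids)

# The rows with IDs 1, 3 and 4 share product and amount but have distinct IDs,
# so they are treated as valid repeated sales and kept.
deduplicated = df.drop_duplicates(subset=["transaction_id"], keep="first")
print(deduplicated)
```

Looking at repeated_ids before dropping anything keeps the decision visible, which also makes it easier to document.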

Further, in time series data, duplicates might signify the frequency of an event. So removing them might result in loss of this information. The nature of the data and the context should guide how to handle duplicates.

Remember, the key is to understand the reason for duplicates in your data. This must happen before deciding on the method to handle them. It is always good practice to document these steps. It increases the reproducibility of your analysis.

5 - Initial insights

Exploratory Data Analysis (EDA) can provide initial insights into your data. For instance, df.corr() provides pairwise correlations between numerical features. You can visualize them with a heatmap.

import seaborn as sns

# numeric_only=True skips non-numeric columns (available in pandas 1.5+)
correlation_matrix = df.corr(numeric_only=True)

sns.heatmap(correlation_matrix, annot=True)

A common mistake is to interpret correlation as causation. Another is to overlook that correlation is a linear measure, so you can miss out on nonlinear relationships. Another point to consider is that Pearson's correlation (the default in pandas) is sensitive to outliers and non-normality. For non-normal data, consider Spearman's or Kendall's correlation.
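Here is a small sketch of the difference, using made-up data where y always increases with x but not along a straight line. Pearson's coefficient understates the relationship, while Spearman's rank-based coefficient captures it fully.

```python
import pandas as pd

# Hypothetical data: y grows monotonically with x, but not linearly.
df = pd.DataFrame({"x": range(1, 11)})
df["y"] = df["x"] ** 3

# Pearson measures linear association; Spearman works on ranks instead.
pearson = df.corr(method="pearson").loc["x", "y"]
spearman = df.corr(method="spearman").loc["x", "y"]

print("Pearson: ", round(pearson, 3))
print("Spearman:", round(spearman, 3))
```

You can pass method="kendall" in the same way to get Kendall's correlation.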