Bayesian Linear Regression

Posterior Distributions, Conditional Probability, Linear Regression, and how all of this comes together in order to make something called a Bayesian Linear Regression

Table of Contents

Frequently Asked Questions (FAQ)

Implementation of Bayesian linear regression in R and Python

Posterior distribution

Linear Regression

Model Parameters

Bayesian linear regression


Frequently Asked Questions (FAQ)

1. What do you mean by Bayesian linear regression model?

Bayesian linear regression is a type of regression analysis that combines the Bayesian approach to probability with linear models.

The Bayesian approach uses the posterior distribution of the model parameters to quantify the uncertainty in those parameters. The linear model is a mathematical model that describes the response variable as a linear combination of one or more explanatory variables (a straight line when there is only one).

Bayesian linear regression allows us to combine these two approaches and to estimate the parameters of a linear model while taking into account the uncertainty in those estimates. This can be helpful, for example, when trying to determine how likely it is that one variable is influenced by another variable.

2. What is Bayesian regression used for?

Bayesian regression is a statistical technique used to estimate the parameters of a model. In Bayesian statistics, the parameters are treated as random variables with probability distributions, which allows us to calculate probabilities for various values of the parameters. This approach can be used for both simple and complex models, and can be applied to small and large data sets alike.

One example of where Bayesian regression could be used is in predicting the price of a house based on its size, location, and other features. The model would take into account the variability in house prices, and would produce a probability distribution for the price of a given house. This information could then be used by buyers and sellers to negotiate prices more effectively.

3. What is Bayesian modelling?

Bayesian modelling is a type of data analysis that allows you to combine your prior beliefs (the prior distribution) with the evidence you collect as you go along. The result is the posterior distribution, which gives you a better-informed estimate of how likely different outcomes are than you would get from your prior beliefs alone.

Bayesian modelling is often used in machine learning and data science. It is typically used to improve the accuracy of predictions by taking into account the uncertainty in those predictions. It can also be used for predictive maintenance, where it's used to figure out which machines are most likely to fail in the future so that preventive maintenance can be scheduled.

4. Is Bayesian linear regression better than linear regression (ols)?

I wish it was a simple yes or no. Unfortunately, it depends on the specific problem that you are trying to solve. OLS stands for ordinary least squares, which is the name of the technique used to solve the linear regression problem.

Both approaches fit the same kind of parametric linear model. The difference is that linear regression (OLS) returns a single point estimate for each parameter, while Bayesian regression returns a full probability distribution over the parameters. Bayesian regression can be more accurate than linear regression in some cases (for example, with few data points or many correlated predictors), but it can also be more complex and difficult to use. In general, Bayesian regression is most useful when you want uncertainty estimates for the parameters and predictions, or when you want to include prior information about the relationship between the predictor variables and the response variable in your analysis.

Another important thing to emphasize is that the usual inference for linear regression (t-tests, confidence intervals) assumes normally distributed error terms. The Bayesian linear model most often uses a normal likelihood as well, but it does not force you to: you can swap in a different distribution for the errors if it suits the data better.

5. How does Bayesian linear regression work?

In Bayesian linear regression, we are interested in estimating the parameters of a linear regression model. We do this by using a posterior distribution, which is updated as we get more data.

The posterior distribution is constructed using Bayes' rule. First, we specify a prior distribution for the parameters of the linear model. This prior can be any distribution that we think might be reasonable (e.g., a normal distribution, a uniform distribution, or another family). Then, as we observe data, we update the prior beliefs about the parameters using Bayes' rule:

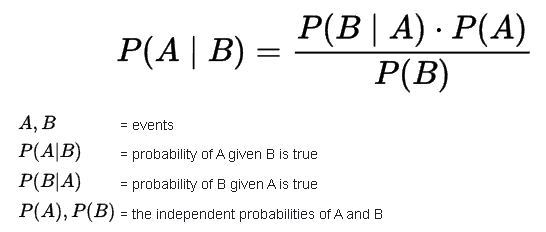

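Here is the Bayes rule:

P(A|B) = P(B|A) * P(A) / P(B)

And here is how the Bayes rule is tweaked for our regression, where the parameters are the regression coefficients and the data is the observed X and Y values:

P(parameters|data) = P(data|parameters) * P(parameters) / P(data)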

You can learn more about the application of the Bayes Rule from BowTiedBettor.
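To make the update step concrete, here is a minimal sketch of the posterior for a linear model in the one case where it has a closed form: a normal prior on the coefficients and normal noise with a known standard deviation. The synthetic data, the names `tau` and `sigma`, and the zero-mean prior are all assumptions made for illustration, not something taken from the data set used later in this post.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (hypothetical): y = 1.0 + 2.0 * x + normal noise
n = 200
x = rng.uniform(-1.0, 1.0, size=n)
X = np.column_stack([np.ones(n), x])   # design matrix with an intercept column
true_beta = np.array([1.0, 2.0])
sigma = 0.5                            # noise standard deviation, assumed known
y = X @ true_beta + rng.normal(0.0, sigma, size=n)

# Prior belief: beta ~ Normal(0, tau^2 * I), a wide and fairly uninformative prior
tau = 10.0
prior_precision = np.eye(2) / tau**2

# Bayes' rule in closed form: the posterior is Normal(post_mean, post_cov)
post_precision = prior_precision + X.T @ X / sigma**2
post_cov = np.linalg.inv(post_precision)
post_mean = post_cov @ (X.T @ y / sigma**2)   # the prior mean is zero, so it drops out

post_sd = np.sqrt(np.diag(post_cov))
print("posterior mean:", post_mean)
print("posterior sd:  ", post_sd)
```

The posterior mean plays the role of the usual coefficient estimate, and the posterior standard deviation tells you how uncertain each coefficient still is after seeing the data.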

Implementation of Bayesian linear regression in R & Python

You can find the raw code for this here.

R

In order to do this in R, we will load up our data, fit a simple Bayesian model to it, and then have the model give us the parameter estimates so that we can examine and assess them. For the analysis, we will use the rstanarm library for the Bayesian model, along with functions from the stats package that ships with R.

Loading our Data

As usual, we'll use the data.table library to load up our data because it is much faster than the default read.csv function.

Train/Test Split

To keep life simple, we'll just do a 50-50 split on the data. 50% goes to training, and 50% goes to testing. We'll also kick out all of the qualitative columns, and only keep the quantitative ones.

Running Bayesian Analysis & Examining our likelihood estimate

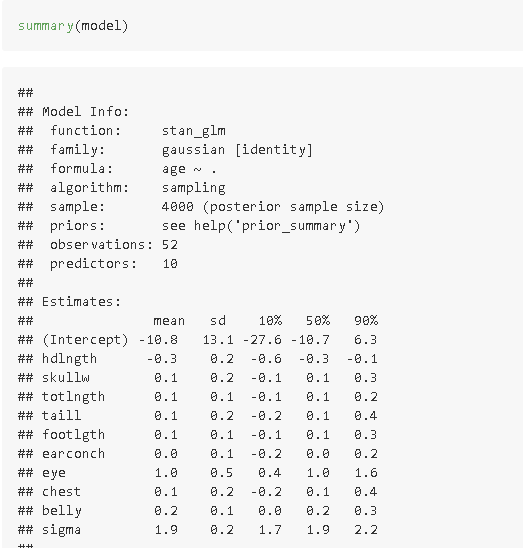

For the first run, I'll just chuck a simple Gaussian distribution into it, and see what comes up.

We have now saved the fitted Gaussian model in a variable called model. Now let's assess the parameters from this model. Also, if you remember the notes for assessing a model's quality from the ANOVA tables, they apply here too.

Now, let's just chuck this into the predictions and see what it looks like.

Overall, Gaussian is pretty bad for this, so let's try out another one. The image below shows you all of the families you can call upon for this model. The family goes in as a parameter in the code itself when you use it. Just keep the formatting similar to what you see above.

Trying & Assessing different Posterior distributions

Now, let's try out one of the other families, and see how it performs. I'll chuck the poisson family at it. All you have to do is go to the code above, and swap out normal() for poisson().

These were the parameter values for this family:

These were the prediction values by using this family.

As you can see, the predictions were pretty bad. Not surprising, since the Poisson distribution is not meant to be used for this type of problem. It is meant for count data, like the number of events within a certain time period. Decent practice, though.

Python

We will use the linear_model module from the sklearn library to get the Bayesian models we need in order to run this in Python. We will also use the sklearn library for the data wrangling.

Loading our Data

Let's do a quick load of our data values and see what we are working with here.

Train/Test Split

Now, let's do a simple 50-50 split on the training and testing data. We will also kick out the qualitative columns, and keep only the quantitative ones.
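As a rough sketch of this step, the snippet below builds a small made-up data frame (the column names `size`, `rooms`, `city`, and `price` are hypothetical stand-ins, not the real data set), drops the qualitative column, and splits the rest 50-50.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Hypothetical data frame standing in for the real data set
df = pd.DataFrame({
    "size": rng.uniform(50, 250, size=100),
    "rooms": rng.integers(1, 6, size=100),
    "city": rng.choice(["A", "B", "C"], size=100),   # qualitative column
})
df["price"] = 1000 * df["size"] + 5000 * df["rooms"] + rng.normal(0, 10000, size=100)

# Keep only the quantitative columns
numeric = df.select_dtypes(include="number")
X = numeric.drop(columns="price")
y = numeric["price"]

# 50% of the rows go to training, 50% go to testing
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.5, random_state=42
)
print(X_train.shape, X_test.shape)
```

Setting `random_state` just makes the split reproducible, so you get the same rows in each half every time you run it.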

Running Bayesian Analysis & Examining our likelihood estimate

Now, let's start off with a simple Bayesian ridge model. The code for this is below. We'll just fit this model to our training set.

Now, let's take a quick look at the predictions that came out of this one.
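Here is a minimal sketch of what fitting and predicting with `BayesianRidge` can look like. The data is synthetic (three made-up features, one of which has no effect), so treat the numbers as illustrative only. The useful part is `return_std=True`, which gives you a standard deviation for every prediction on top of the prediction itself.

```python
import numpy as np
from sklearn.linear_model import BayesianRidge
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(1)

# Synthetic data (hypothetical): the third feature has no effect on y
X = rng.normal(size=(200, 3))
y = 3.0 + X @ np.array([1.5, -2.0, 0.0]) + rng.normal(0, 0.5, size=200)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.5, random_state=0
)

# Fit the Bayesian ridge model on the training half
model = BayesianRidge()
model.fit(X_train, y_train)

# Predictive mean and standard deviation for each test row
y_mean, y_std = model.predict(X_test, return_std=True)

print("coefficients:", model.coef_)
print("intercept:   ", model.intercept_)
print("first predictions:", y_mean[:5])
print("their std devs:   ", y_std[:5])
print("R^2 on test set:  ", model.score(X_test, y_test))
```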

Trying & Assessing different Posterior distributions

Another one from the sklearn library you can use is the Bayesian ARD (automatic relevance determination) regression. In order to use it, just swap out BayesianRidge for ARDRegression. Now let's take a look at how that one performs.
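A sketch of that swap, again on synthetic data where one feature is deliberately irrelevant (an assumption made so there is something for ARD to shrink). Both models are fit side by side so the coefficients can be compared.

```python
import numpy as np
from sklearn.linear_model import ARDRegression, BayesianRidge
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(2)

# Synthetic data (hypothetical): the third feature is irrelevant
X = rng.normal(size=(200, 3))
y = 3.0 + X @ np.array([1.5, -2.0, 0.0]) + rng.normal(0, 0.5, size=200)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.5, random_state=0
)

# Same workflow as before, only the estimator class changes
ridge = BayesianRidge().fit(X_train, y_train)
ard = ARDRegression().fit(X_train, y_train)

ard_mean, ard_std = ard.predict(X_test, return_std=True)

print("BayesianRidge coefficients:", ridge.coef_)
print("ARDRegression coefficients:", ard.coef_)
print("BayesianRidge R^2:", ridge.score(X_test, y_test))
print("ARDRegression R^2:", ard.score(X_test, y_test))
```

ARD gives every coefficient its own prior precision, so it tends to push coefficients of irrelevant features closer to zero than the ridge version does.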

Posterior distribution

Conditional Probability

A posterior distribution is just a fancy name for a conditional probability distribution: the distribution of the parameters, given the data we have observed. Conditional probability is a measure of the probability of an event occurring, given that another event has already occurred. In other words, it's a way of quantifying the relationship between two events.

For example, let's say you draw one card from a deck and you want to know the probability that it is the number 7, given that you already know the card is a club. The conditional probability would be calculated as follows:

P(Number 7|Club) = P(Number 7 and Club)/P(Club)

where P(Club) is the overall probability of getting a club from the deck (irrespective of everything else), P(Number 7|Club) is the conditional probability of getting the number 7, given that you've already drawn a club, and P(Number 7 and Club) is the probability of drawing a card that is both the number 7 and a club.

Here is how the calculation would work:

Normal way

A normal deck of cards has 52 cards. Only one of them is the Number 7 of Clubs. We also know that there are 13 cards which are clubs. From the above, we are given that we have drawn a club. This means the denominator of our fraction is 13. Based on this, what is the posterior probability that we end up with the number 7?

Well, there is only 1 Number 7 of Clubs. So the answer is 1/13.

Conditional Probability Formula way

We can just go straight to the formula.

What is P(Number 7 and Club)? 1/52.

What is P(Club)? 13/52 = 1/4.

Now apply the formula:

P(Number 7|Club) = (1/52)/(1/4) = 4/52 = 1/13

Same answer as from the above approach.
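If you want to double check the arithmetic, here is a small sketch that builds the deck and counts the cards directly. The rank and suit labels are just my own naming for the illustration.

```python
from fractions import Fraction
from itertools import product

ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["Clubs", "Diamonds", "Hearts", "Spades"]
deck = list(product(ranks, suits))          # 52 (rank, suit) pairs

clubs = [card for card in deck if card[1] == "Clubs"]
seven_and_club = [card for card in deck if card == ("7", "Clubs")]

# "Normal way": restrict the deck to clubs and count the sevens
p_normal_way = Fraction(len(seven_and_club), len(clubs))

# "Formula way": P(Number 7 and Club) / P(Club)
p_seven_and_club = Fraction(len(seven_and_club), len(deck))
p_club = Fraction(len(clubs), len(deck))
p_formula_way = p_seven_and_club / p_club

print(p_normal_way)    # 1/13
print(p_formula_way)   # 1/13
```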

Bayes Rule

The Bayes Rule is a mathematical formula used to calculate the conditional probability of an event, based on the prior probability of that event and the likelihood of that event occurring given some other information. In other words, it helps to determine how likely something is to happen, after taking into account all relevant information. Here is the mathematical Bayes Rule:

P(A|B) = P(A and B)/P(B) = P(B|A) * P(A) / P(B)

For those of you who have studied actuarial sciences, you know exactly why the numerator can indeed be changed like that. For those not studying it yet, here is a solid video on the proof of why this works:
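As a quick sanity check, here is a sketch that runs the card example through the Bayes Rule, with A being "the card is a 7" and B being "the card is a club". The helper name `bayes_rule` is made up for this illustration.

```python
from fractions import Fraction


def bayes_rule(likelihood, prior, evidence):
    """Return P(A|B) from P(B|A), P(A) and P(B)."""
    return likelihood * prior / evidence


p_seven = Fraction(4, 52)              # P(A): four 7s in the deck
p_club = Fraction(13, 52)              # P(B): thirteen clubs in the deck
p_club_given_seven = Fraction(1, 4)    # P(B|A): one of the four 7s is a club

# The swapped numerator: P(A and B) = P(B|A) * P(A)
p_seven_and_club = p_club_given_seven * p_seven

p_seven_given_club = bayes_rule(p_club_given_seven, p_seven, p_club)

print(p_seven_and_club)     # 1/52
print(p_seven_given_club)   # 1/13
```

The numerator works out to 1/52, which is exactly the P(Number 7 and Club) from the card example, and the final answer matches the 1/13 we got there.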

Bayesian Methods

Bayesian methods are a branch of statistics that deals with the interpretation of data as it relates to prior knowledge. In other words, they take into account what we know about a situation in order to make better predictions about the likelihood of something occurring.

This makes them especially useful for dealing with uncertainty, since they take into account our level of confidence in our current knowledge. This can be extremely helpful in real-world situations where we often don't have all the information we need to reach a definitive conclusion.

Bayesian methods are particularly well-suited for problems where the data is uncertain and/or there is prior knowledge about the problem that can be incorporated into the analysis. They have been used in a variety of fields, including medical diagnosis, natural language processing, machine learning, and data science.

Linear regression

Recall from this post that the linear model is simply a way to see if we can combine several explanatory variables (Xs) in a linear combination in order to predict a response variable (Y). Here is the equation for it, where b0 is the intercept, b1 to bk are the coefficients, and the error is whatever the line cannot explain:

Y = b0 + b1*X1 + b2*X2 + ... + bk*Xk + error

You can even follow up this section with a quick recap on log linear models.
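For reference, here is a minimal sketch of the plain OLS fit for a linear model with two explanatory variables, using numpy's least squares solver. The data and the "true" coefficients are made up so that we can see the fit recover them.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (hypothetical): Y = 2.0 + 0.5*X1 - 1.0*X2 + error
n = 500
X1 = rng.normal(size=n)
X2 = rng.normal(size=n)
true_b = np.array([2.0, 0.5, -1.0])
Y = true_b[0] + true_b[1] * X1 + true_b[2] * X2 + rng.normal(0, 0.3, size=n)

# Design matrix: a column of ones for the intercept, then the Xs
A = np.column_stack([np.ones(n), X1, X2])

# OLS: the single set of coefficients that minimizes the squared error
b_hat, residuals, rank, _ = np.linalg.lstsq(A, Y, rcond=None)

print("estimated coefficients:", b_hat)
```

Notice that OLS hands back one number per coefficient and nothing else, which is the gap the Bayesian version fills in.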

Model parameters

Model parameters are the quantities inside a model, such as the intercept and the slopes of a regression, that are tuned to the data in order to optimize a machine learning model. In the Bayesian approach, the things you get to set or estimate include:

Prior beliefs about how likely certain parameter values are

How much weight to give to new data points when updating those beliefs

How much uncertainty there is in the data itself

How to skew data points, based on knowledge of the prior distribution

In normal regression (frequentist approach), the parameters are treated as fixed but unknown numbers, and you just want the single set of weights that fits the data best. In the Bayesian approach, the parameters themselves get a probability distribution: we pick a prior for them (which we will have to try and play around with), and then we use the data to calculate the posterior distribution of the parameters. In other words, with the Bayesian approach, we have some sort of prior belief which pulls the model parameters in one specific direction.

Another major difference from the frequentist approach (classical approach) is what happens when new data enters the model. In the classical frequentist method, you typically re-train the entire machine learning model on all of the data points, whereas in the Bayes approach, you already have your beliefs ready to go: the current posterior becomes the new prior, and you merely update it with the new data points.
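Here is a sketch of that updating idea for the simplest case, a normal prior with normal noise of known standard deviation, where the update has a closed form. The helper `update_posterior` and the synthetic data are assumptions made for illustration. The point is that updating in two batches lands on the same posterior as using all of the data at once.

```python
import numpy as np


def update_posterior(prior_mean, prior_cov, X, y, sigma):
    """Normal prior + normal likelihood (known sigma) -> normal posterior."""
    prior_precision = np.linalg.inv(prior_cov)
    post_precision = prior_precision + X.T @ X / sigma**2
    post_cov = np.linalg.inv(post_precision)
    post_mean = post_cov @ (prior_precision @ prior_mean + X.T @ y / sigma**2)
    return post_mean, post_cov


rng = np.random.default_rng(0)

# Synthetic data (hypothetical): y = 1.0 + 2.0 * x + noise
n = 100
sigma = 0.5
X = np.column_stack([np.ones(n), rng.uniform(-1, 1, size=n)])
y = X @ np.array([1.0, 2.0]) + rng.normal(0, sigma, size=n)

prior_mean = np.zeros(2)
prior_cov = 100.0 * np.eye(2)

# All of the data in one go
mean_all, cov_all = update_posterior(prior_mean, prior_cov, X, y, sigma)

# Two batches: the posterior after batch 1 becomes the prior for batch 2
mean_1, cov_1 = update_posterior(prior_mean, prior_cov, X[:60], y[:60], sigma)
mean_seq, cov_seq = update_posterior(mean_1, cov_1, X[60:], y[60:], sigma)

print("all at once: ", mean_all)
print("sequentially:", mean_seq)
```

Outside of this conjugate setup the update usually needs sampling or an approximation, so treat this as the cleanest possible version of the idea.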

Bayesian Linear regression

Remember, in linear regression, we are assuming the error terms follow a normal distribution, and we are interested in finding the single best solution to the OLS problem.

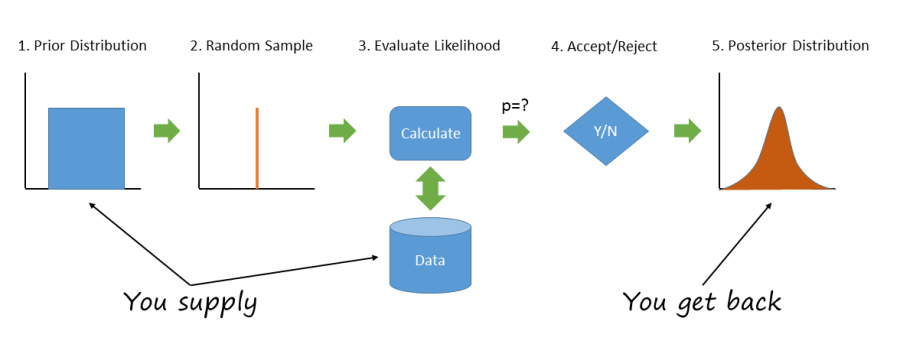

In the Bayesian linear model, we are interested in finding the best overall distribution for the model, and also the best model parameters for that said distribution. In order to implement the Bayesian model for our training data, here is what we have to do:

First we need to specify the prior distribution (basically what you believe about the model parameters before seeing any data).

Once we are done with that, we will need to create a simple mapping (linear model) that showcases how the X random variables can be combined to predict the Y random variable.

Based on this, we will then get the posterior distribution of the model parameters from our prior distribution and the data points we feed to our model.

Then, as usual, once we have the model parameters we can do a simple k-fold cross validation to assess whether these are good or not.
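The last step can be sketched with sklearn's cross validation helpers. This is only an illustration on synthetic data, using `BayesianRidge` as the model and 5 folds as an arbitrary choice.

```python
import numpy as np
from sklearn.linear_model import BayesianRidge
from sklearn.model_selection import KFold, cross_val_score

rng = np.random.default_rng(3)

# Synthetic data (hypothetical)
X = rng.normal(size=(200, 3))
y = 3.0 + X @ np.array([1.5, -2.0, 0.0]) + rng.normal(0, 0.5, size=200)

# 5-fold cross validation: fit on 4 folds, score on the held-out fold, repeat
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(BayesianRidge(), X, y, cv=cv)   # R^2 per fold

print("R^2 per fold:", scores)
print("mean R^2:    ", scores.mean())
```

If the scores jump around a lot from fold to fold, that is a hint the model (or the prior) is not a great match for the data.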

Bayes <3.